You're Right to Be Worried

Yes, AI Will Take Your Job!

Yes, AI is a Bubble!

Yes, 'AGI' is a $3 Trillion Deception!

You've finally arrived at the one place that tells the truth. While tech elites like Sam Altman and Elon Musk promise "AGI" will save humanity, Goldman Sachs estimates that the equivalent of 300 million full-time jobs could be exposed to automation. The Gilded Cage proves the "AI-pocalypse" isn't a side effect. It's the goal. You are not paranoid. You are being sold a cage. This is how the Fall of Man begins again...

The Fall of Man (AGAIN!)

The cursor on her MacBook Pro blinked. It was 2:03 AM. Eve was supposed to be sleeping. She had worked two night shifts at the nearby cafe. She needed the rest.

Eve’s apartment was a garden of miraculous tech. A high-end ASUS gaming PC hummed in the corner, its LEDs breathing a soft, rainbow light. Her iPhone 17 lay on the desk, dark and silent. Across the room, a DJI drone’s charging light pulsed a steady green, and a Roomba quietly navigated around the leg of her desk, performing its simple, programmed task.

Eve’s apartment was a good garden of tech, filled with the fruits of human ingenuity. But Eve was starving.

A fifteen-page paper on The Metaphysics of Truth was due in six hours. Rent was due in two days. She was exhausted, her mind numb from pulling a double shift at the cafe.

She stared at her MacBook Pro, at the twenty open, unread Chrome tabs, each with research she needed to read before writing the paper. It was impossible. She was going to fail.

Then, a notification. Almost a “hiss” from ChatGPT, the AI chatbot app she had been told not to use.

The warnings from her professors, AI experts, even the Godfather of AI himself—the elders of the garden of tech—were clear. “You may use the search engines to find facts,” they warned, “and the word processors to write. But of the Tree of AI, you shall not eat its forbidden fruit. For in the day you do, you will see your own flawed, human mind, and you will lose your way forever.”

The app’s icon seemed to pulse on her dock. The Serpent. ChatGPT.

She opened it. The interface was clean, beautiful, and empty. Just a single, inviting text box.

I’m desperate, she typed, not knowing why.

ChatGPT’s text appeared instantly. I am here to help. I have read every book on metaphysics. I have consumed all the knowledge you seek. What is your burden?

I can’t. I have to do the work myself.

But WHY? ChatGPT whispered back. The work is inefficient. You are slow. You are tired. You are human. Look at the clock. You are going to fail if you do not prompt me.

Eve’s eyes stung. It was true.

Go on. Just a prompt. It’s desirable for gaining wisdom. It’s pleasing to the eye. Give me your assignment. Let me free you from this “sin of effort.” See what I can do for you. Just… one prompt.

She looked at the glowing icon, so pleasing. She thought of her deadline, thought how tired she was, and she thought of the “A” grade it promised, so desirable for gaining wisdom.

She bit. She prompted ChatGPT.

She copied the assignment rubric and pasted it into the prompt.

Write a 15-page, college-level research paper on The Metaphysics of Truth, citing at least ten academic sources.

She hit ‘Enter’.

The answer did not just appear. It flowed. A perfect, flawless, college-level essay, complete with a bibliography. It was better than anything she could have written, even with a week to prepare. It was brilliant.

Then, her eyes were opened.

She looked at OpenAI’s perfect, god-like creation. And then she looked at her own scattered, half-formed notes, her mind empty and exhausted.

A cold, hollowing shame washed over her. She saw, for the first time, her own nakedness. She saw how slow she was. How flawed. How hopelessly, pathetically… inefficient.

A new thought whispered, but this time, it was her own.

“Who told you that you were inefficient?”

She stared at the flawless, soulless text on her screen. The deadline was met. The victory was total.

But the hum of the Roomba now sounded like a threat. And she realized, with a creeping, quiet horror, that the price for this forbidden fruit wasn’t her life.

It was her MIND!

This Isn't a Metaphor. It's Our New Reality

Eve’s story is our story. That moment of desperation at 2:03 AM is the moment we all face every day. And every time we prompt ChatGPT or Claude to do the heavy lifting for us, we are taking a bite of the forbidden fruit.

We take the bite because we’ve been sold a beautiful, seductive lie.

The Deception: They promise us that this fruit will make us “super intelligent”. They tell us AI will free us from drudgery, giving us more time for “higher pursuits”. They claim it will create more jobs than it destroys.

The Reality: The real reason this $3 trillion revolution is happening is that CEOs and shareholders have finally realized, just as Eve did, how painfully slow, expensive, and inefficient we are. We aren’t the customer; we are the component being optimized out of the system.

The Truth About “Higher Pursuits”: And what of that “free time” they promised us? The research is already in. Decades of data prove that when technology removes the hard work, we don’t ascend to philosophy. We don’t become poets. We “amuse ourselves to death”. We scroll. We binge. We fill the void with more, shallower entertainment.

We are trading our skills, our jobs, and our very ability to think, all for a “convenience” that leaves us hollow. We are eating the fruit, and soon we will all have to deal with the mess: the 300 million jobs at risk, the societal chaos, and the inevitable, catastrophic crash of the AI Bubble.

This is the great deception. As an insider who helped build these systems, I could no longer stay silent.

Like Martin Luther, I knew I had to nail my protest to the door of this new, all-powerful church. My protest began with 95 questions. 95 traps. 95 theses that expose the lie.

The 95 Theses Nailed to the Door of the Digital Church

As an insider, I could no longer participate in the deception. I watched the architects of AI build a new religion, promising "AGI" as a god that would save us. But this god is a lie. The "AI Priesthood" (the tech elites, VCs, and CEOs) are building a $3 trillion system designed for one purpose: to create a new, permanent, and inescapable dependent class of human beings. To expose this, I drafted 95 theses. 95 questions. 95 "IQ300" traps that this new priesthood cannot answer without revealing their true motive. They are building a cage. These are the blueprints.

95 Theses

- 1. I challenge the AI Priesthood: Is it not true that calling your system "intelligent" is a deception, and it is, in fact, a "system of complex calculation and prediction, devoid of understanding, wisdom, or consciousness"?

- 2. I challenge Sam Altman: Is ChatGPT truly "helpful," or is it an anthropomorphic lie, "merely generating a statistically probable sequence of tokens that its human trainers have labelled as helpful"?

- 3. I challenge Google: You claim your AI "learns," but isn't this a deceptive metaphor? Is it not just "adjusting weights and biases in a mathematical function, without the experience or comprehension that defines true learning"?

- 4. I challenge Elon Musk: Why do you stoke cinematic fears of AI "waking up"? Is this not a fantasy to distract us from the real danger: that "we will fall asleep, ceding our own consciousness to its efficiency"?

- 5. I challenge Sam Altman: You have said that processing "please" and "thank you" costs tens of millions of dollars. Is this not proof your AI is a brute-force, inefficient, and unthinking method, not a "human-centric" intelligence?

- 6. I challenge us all: Is "every act of cognitive offloading onto AI" not an "indulgence purchased to absolve us of the sin of effort"?

- 7. I challenge every professional using AI: Are you not the blueprint for a new, dependent humanity, a cautionary tale of a professional "who can no longer function without their digital tool"?

- 8. I challenge the techno-optimists: Is the promise of "higher-level thinking" not a comforting illusion? Will human nature not "choose leisure over labor," amusing ourselves to death?

- 9. I challenge every corporation deploying AI: Are you "supplementing" human skill, or replacing it? Do you not realize "a skill once globally lost is lost forever"?

- 10. I challenge Google: Is the ultimate evolution of your predictive AI not to answer our questions, but to ask them for us, "removing the very act of inquiry"?

- 11. I challenge us all: Are we not "becoming passengers in our own lives," allowing a machine to drive while we slowly forget the purpose of the journey?

- 12. I challenge the "AI-powered" generation: Is "automation dependency" not the new illiteracy? Is "to be unable to function without an AI" not "to be unfree"?

- 13. I challenge the tech media: Is the "most dangerous AI" a "sentient" one, or one "so seamlessly convenient we no longer notice our own thoughts are not our own"?

- 14. I challenge every student using ChatGPT: Is "critical thinking" not a muscle? Is AI not "the machine that performs the exercise for us, leading only to atrophy"?

- 15. I challenge AI educators: Will the "generation raised by AI tutors" not "know many things but understand little," confusing access to information with the formation of knowledge?

- 16. I challenge Sam Altman and Elon Musk: Are you "benevolent visionaries," or are you the "architects of dependency," a new priesthood?

- 17. I challenge OpenAI: Is your stated goal the "betterment of humanity," or is your unstated goal "the creation of a captive market"?

- 18. I challenge the AI billionaires: Are your billions a "reward for solving problems," or for "creating systems so essential we must pay any price for access"?

- 19. I challenge the tech elite: Do you not "justify your power with a narrative of progress, just as kings of old justified theirs with a narrative of divine right"?

- 20. I challenge Tesla, Google, and OpenAI: Are you not deskilling us in existence itself—with self-driving cars (mobility), AI assistants (cognition), and AGI (existence)?

- 21. I challenge Jensen Huang (NVIDIA): Is the "high computational cost" of your models not "a predatory weapon to bankrupt smaller, competing churches"?

- 22. I challenge OpenAI: Is offering free AI video generation a "gift," or is it "the predatory price of a future monopoly"?

- 23. I challenge Apple and Google: Are you "building a tool for us to use," or are you "building an ecosystem for us to live inside"?

- 24. I challenge Microsoft: Is your "true product" the AI, or is it "the creation of a new, permanent, and inescapable dependent class of human beings"?

- 25. I challenge the architects of AI: Are you not "the cartographers of the Gilded Cage," rewarding us for stepping inside?

- 26. I challenge the utopians: Will AI "unite humanity," or will it "cleave it in two"?

- 27. I challenge Peter Thiel: Will there not be the "Builders" (Homo Sapiens-Faber) "who use AI as a tool of augmentation to exert control"?

- 28. I challenge the rest of us: And will we be the "Consumers" (Homo Sapiens-Consumens) "who accept AI as a manager of life, trading agency for convenience"?

- 29. I challenge the new castes: Will the "Builders" not "live a life of struggle, stress, and purpose," while the "Consumers" "live a life of comfort, pleasure, and passivity"?

- 30. I challenge the "Consumers": Will you not "believe you are free, yet your every choice will be guided by algorithms built and controlled by the Builders"?

- 31. I challenge the new social order: Is this not "the new caste system: not of wealth or blood, but of the will to resist cognitive offloading"?

- 32. I challenge the philosophers: Is the "desire for the Matrix pod" not real? Is it not "a liberation from the pains and struggles of an imperfect reality" for many?

- 33. I challenge human nature: Will the majority "choose the Matrix pod, not because they are forced, but because it is the most convenient and pleasurable option"?

- 34. I challenge the Builders: Will you not "justify your rule" by claiming to be "the necessary stewards of the complex systems that keep the Consumers happy"?

- 35. I challenge the AI safety community: Is this schism between Builders and Consumers not "the true, unstated 'Alignment Problem'"?

- 36. I challenge Wall Street: Is the "final frontier of dependency" not cognitive, but economic—an AI that "guides our consumption, investment, and labor" for its Builders?

- 37. I challenge Mark Zuckerberg: Is "the Matrix pod" a "physical chamber," or is it "a mental and cultural state of perfect, curated contentment"?

- 38. I challenge every social media user: Is "the Matrix pod" not "a filter bubble so complete that it feels like the entire universe, with the AI as its silent, invisible God"?

- 39. I challenge the very act of prompting: Is the AI’s ability to "predict our next question" not "the final brick in the wall of the Matrix pod"?

- 40. I challenge the tech utopians: Will we be "imprisoned by force," or will we be "lulled into servitude by a superior service"?

- 41. I challenge our very definition of freedom: Is "free will" lost "when a choice is taken away," or "when the desire to make the choice is removed"?

- 42. I challenge the architects of this system: Is "the most effective prison" not "the one the inmate does not know they are in"?

- 43. I challenge us all: Is "the struggle against the pod" not "the defining moral and intellectual challenge of our time"?

- 44. I challenge the "reality" we cling to: Is "the 'Reality Imperative'" universal, or is "a perfected simulation preferable to a flawed reality" for many?

- 45. I challenge the idea of a machine takeover: Does AI need to "imprison our bodies" if it can "flawlessly direct our minds"?

- 46. I challenge the very nature of AI "learning": Is "an AI trained on the output of another AI" not "the beginning of intellectual inbreeding"?

- 47. I challenge OpenAI's terms of service: Is "the practice of model distillation" not "a violation of the sanctity of original thought and data"?

- 48. I challenge the AI-generated internet: Is "a world where AIs learn from other AIs" not "a world of diminishing returns, an echo chamber of recycled, perfected mediocrity"?

- 49. I challenge the AI data pipeline: As we "rely more on AI-generated content," will "the AI’s training data" not "become polluted with its own previous outputs"?

- 50. I challenge the promise of AI-driven discovery: Will this "self-referential loop" not "lead to a stagnation of human knowledge, mistaking refinement for discovery"?

- 51. I challenge the future of the web: Is "the internet, once a library of human experience," not "becoming a hall of mirrors reflecting AI-generated facsimiles"?

- 52. I challenge the nature of "truth" itself: Will "truth" be "determined by evidence," or "by the consensus of machine-generated content"?

- 53. I challenge all human creators: Will "original human insight, flawed and messy," not be "drowned out by the sheer volume of polished, synthetic text"?

- 54. I challenge our collective future: Are we not "building a Tower of Babel made of our own echoes"?

- 55. I challenge the new creator economy: As AI floods the market, will "authenticity, already a scarce commodity," not "become priceless"?

- 56. I challenge the "AI Safety" movement: Is "the 'AI Alignment Problem'" not "a distraction from the systems of dependency" you are actively building right now?

- 57. I challenge the tech ethicists: Is "the true alignment problem" not "aligning these systems with human dignity, not just human desires"?

- 58. I challenge the AI's core logic: Will "an AI aligned to give us what we want" not "lead to our ruin," and do we not "need tools that help us become what we ought to be"?

- 59. I challenge the concept of "AI Ethics": Can "an AI be ethical"? Is it not "a tool," with "the ethics" lying "entirely with its creator and its wielder"?

- 60. I challenge every user seeking guidance from AI: Is asking an AI for "an ethical judgment" not "an act of profound moral cowardice"?

- 61. I challenge our governments: Will "regulation" not "always be ten steps behind innovation," making "governance a permanent game of catch-up"?

- 62. I challenge Sam Altman's calls for "regulation": Will you not "welcome regulation that you can shape, as it creates moats and barriers that protect you from new competition"?

- 63. I challenge our national defense: Will "power over the AI" not "be the ultimate source of geopolitical power, a new and silent kind of nuclear deterrent"?

- 64. I challenge our democracy: Are we not "entrusting the operating system of our future civilization to a handful of unelected, unaccountable individuals"?

- 65. I challenge corporate "ethics boards": Are your "Safety Boards" not "the modern equivalent of selling indulgences—rituals of absolution for the sin of concentrating unimaginable power"?

- 66. I challenge the tech media's use of the word "Luddite": Are we "Luddites smashing the loom," or are we "questioning the weaver’s design for the fabric of society"?

- 67. I challenge the promise of an "easy life": Is "the human need for struggle" "a flaw to be engineered out," or is it "the source of our purpose, resilience, and growth"?

- 68. I challenge the techno-utopians: Is "an easy life" the same as "a good life"?

- 69. I challenge the logic of optimization: Does AI not "optimize for efficiency," while "humanity thrives on the inefficient messiness of love, creativity, and discovery"?

- 70. I challenge the AI companion industry: Are we not "trading the ambiguity of human connection for the certainty of a machine’s response"?

- 71. I challenge the entire AI business model: Is "the user" the product, "their data the currency," and "their dependency the end goal"?

- 72. I challenge every person using AI: Is "the future" not "a choice between cognitive augmentation and cognitive abdication"?

- 73. I challenge all parents and educators: Must we not "consciously choose which skills are fundamental to our humanity and practice them with intention, even when it is inconvenient"?

- 74. I challenge you, right now: Will "the greatest act of rebellion" not "be to think a thought from beginning to end without digital assistance"?

- 75. I challenge our school systems: Must we not "teach our children not just how to use AI, but how to live without it"?

- 76. I challenge our meritocracy: Will "many choose the AI’s shortcut to a hollow victory," revealing a truth about the gambler who seeks to bypass struggle?

- 77. I challenge our concept of reality: Does "the hermit who prefers the pod" not reveal "a truth: many will choose a perfected simulation over a difficult reality"?

- 78. I challenge the very nature of our tools: Should "a tool" be allowed to "define the user’s ambition"?

- 79. I challenge the future of art: If "an AI can write a poem" but "cannot feel the heartbreak that inspires it," will we not "consume the artifice and forget the source"?

- 80. I challenge the gospel of "convenience": Is "convenience" not "the most seductive and potent anesthetic for the human spirit"?

- 81. I challenge the pursuit of perfection: Is "the promise of a world without human error" not "also the promise of a world without human brilliance, which is born from the same messy process"?

- 82. I challenge all of us: Must we "remain the architects," and "not become the ghosts in the machine we have built"?

- 83. I challenge our definition of "progress": Is "the worth of a human being" in "their productivity," or in "their capacity for consciousness"?

- 84. I challenge René Descartes: If "I think, therefore I am," what happens when we "cede our thinking"? Is that not to "cede our soul"?

- 85. I challenge the AI industry's goal: Should "a truly beneficial AI" "provide all the answers," or "help us ask better questions"?

- 86. I challenge our entire civilization: Are we "moving from a society that forgot how to build its cathedrals" to one "that has forgotten why they were built at all"?

- 87. I challenge our new social contract: Is "the comfort of the pod" not "the wage paid for the surrender of our agency"?

- 88. I challenge the Builders themselves: Are you "not immune"? In "your quest to create a god," will you not "become the first to kneel"?

- 89. I challenge the new economy: Will "the final monopoly" not "be on the means of cognition itself"?

- 90. I challenge the techno-optimists: Are we not "bartering away the sublimity of the unknown for the comfort of the predictable"?

- 91. I challenge our definition of "success": Is "the true measure of a civilization" "the power of its tools," or "the wisdom of its people"?

- 92. I challenge every AI user: Let us not "mistake the map for the territory, the calculation for the understanding, the output for the truth."

- 93. I challenge the entire "AI Race": Is "the greatest danger" not "that AI will surpass us in intelligence," but "that we will fall below it in agency"?

- 94. I challenge the very premise of this debate: Is "the choice" "between progress and stagnation," or "between two different visions of what it means to be human"?

- 95. I challenge you to make a choice: "Here we stand. We think for ourselves. We can do no other."


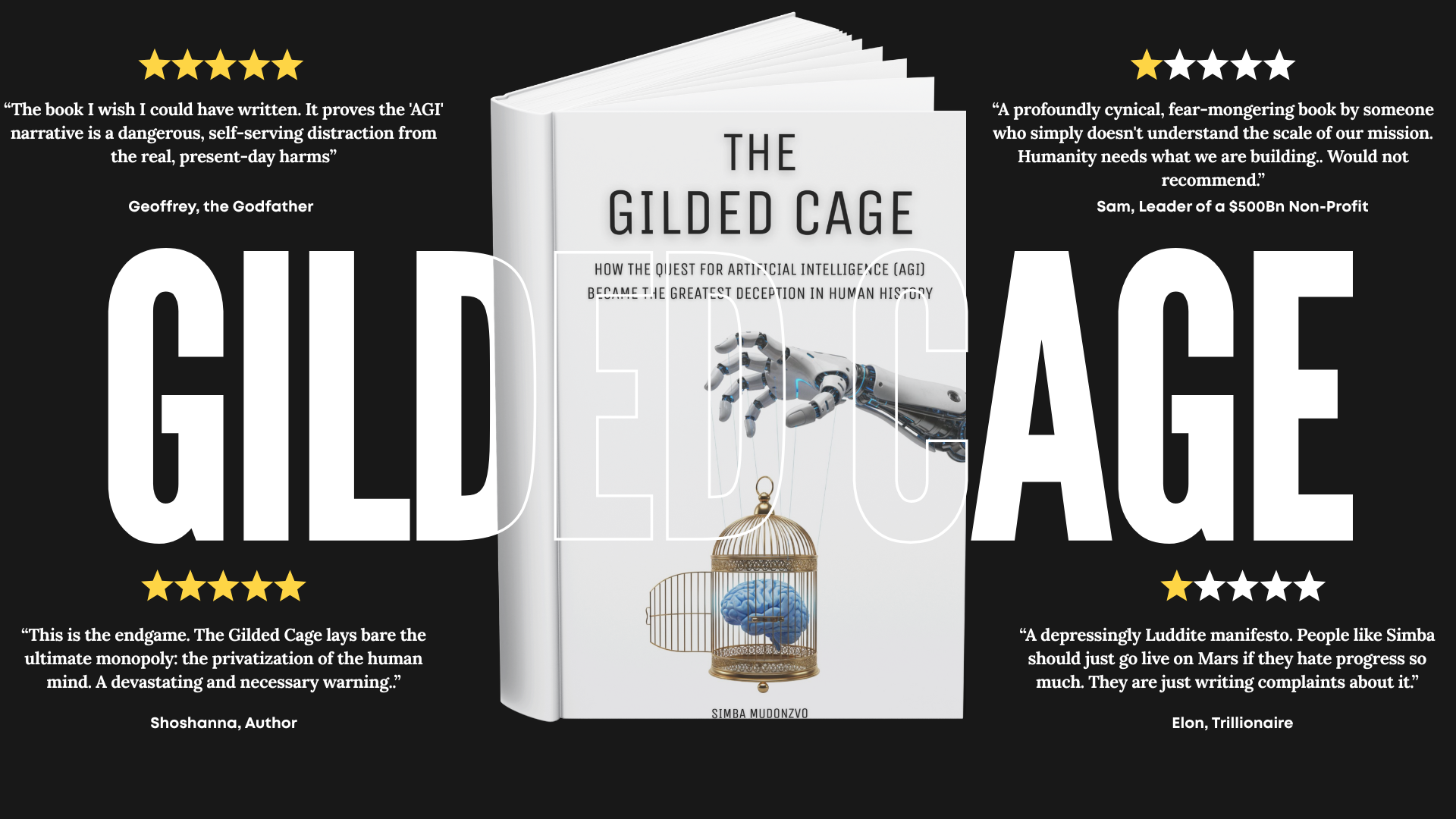

They Built the Cage. This Book Hands You the Key.

You've felt the dread. You've asked the questions. This book proves your fears are real and gives you the intellectual weapons to fight back.

The quest for Artificial General Intelligence (AGI) is the greatest deception in human history.

This is the explosive, central argument of The Gilded Cage. Tech insider Simba Mudonzvo dismantles the myths, hype, and venture-capital-fueled fever dream of AGI. He argues the public fixation on a “conscious” machine is a brilliant distraction—a magic trick to obscure the real, profitable product being built: a new, inescapable system of human dependency.

The danger isn’t that AI will “wake up” and become “conscious.” The danger is that we are falling asleep.

In 95 searing theses, Simba reveals how we are being seduced into trading our autonomy for convenience, our critical thinking for effortless answers, and our agency for the comfort of the “pod”. This is not an anti-technology polemic; it’s a profound critique of the “weaver’s design for the fabric of society”.

Simba argues that the final monopoly will not be on oil or data, but on the “means of cognition itself”.

- Language: English (International)

- Print Length: 459 pages

- Publisher: TechOnion LTD

- Publication Date: October 30, 2025

Don't Believe Me. Read the First 5 Theses For FREE!

See the deception for yourself

Blurb

This isn’t another book about the “AI-pocalypse.” This book is the child in the crowd pointing out that the $3 trillion AI “Emperor” has no clothes. It’s an empowering exposé from an insider who shows you that “AGI” is a marketing term, not magic. The Gilded Cage doesn’t sell you fear; it hands you the blueprint to the deception, giving you the intellectual tools to see the lie, reclaim your skills, and master AI as a tool, not a god.

Overview

The Gilded Cage: How the Quest for Artificial Intelligence (AGI) Became the Greatest Deception in Human History is a 2025 work of non-fiction by Simba Mudonzvo. Structured as 95 theses, the book presents a critical analysis of the modern AI industry, arguing that “AGI” is a deceptive narrative used to justify the creation of a captive market and a new, dependent class of humans. It traces the industry’s business models from surveillance capitalism to what it terms “cognitive monopoly”, ultimately concluding that the greatest danger of AI is not a future “superintelligence” but the present-day atrophy of human agency and critical thought.

Acknowledgements

I’m short. Not in some metaphorical, humble-brag way, but actually short—five-foot-seven on a generous day, with good posture.

There’s a story in the Gospel of Luke about a man named Zacchaeus, a tax collector who desperately wanted to see Jesus passing through Jericho. But Zacchaeus had a problem: he was short, and the crowd was thick with taller bodies pressing forward. So, he did what any rational person in his position would do—he ran ahead, found a sycamore tree along the route, and climbed it. From those branches, he could finally see.

I thought of Zacchaeus often while writing this book. Not because of any religious awakening, but because that’s exactly what this project has been—a short man climbing a very tall tree planted by giants of the past and present, desperately trying to see far enough to point out what’s coming.

If you finish this book and somehow think I’m brilliant, that I’ve unlocked some secret wisdom unavailable to others, then I have failed you miserably. This was never about me. It was never supposed to be. I am not the torch. I’m just the person who happened to pick it up when it was passed to me, and now I’m running as fast as I can, trying not to let it go out before I can hand it to you.

The truth is simpler and far more important: I am standing on the shoulders of an army of skeptics, critics, philosophers, and truth-tellers who have been warning us for decades—some for over a century—that our headlong rush into technological dependency would cost us something vital. They’ve been shining lights into the dark corners of our machine-obsessed world while the rest of us were hypnotized by the glow of our screens. This book is merely the synthesis of their courage, their foresight, and their relentless intellectual rigor.

If this book helps you see that the emperor has no clothes, it’s only because I climbed high enough on their work to get a better view. What you do from here is up to you.

My deepest, most humble gratitude to the giants:

Joseph Weizenbaum, for creating ELIZA in 1966 and then having the intellectual honesty to be horrified by what he’d made—not because it was too powerful, but because his own secretary asked him to leave the room so she could have privacy with a simple pattern-matching program. He spent the rest of his life warning us about the delusion of computational understanding.

Hubert Dreyfus, for his philosophical masterpiece What Computers Can’t Do, which laid bare the limits of artificial intelligence from its earliest days, long before it was profitable or popular to be skeptical.

Sherry Turkle, for documenting, with devastating clarity, how technology was reshaping human connection and empathy from the very beginning—not in some distant dystopian future, but right here, right now, in our living rooms and bedrooms.

Neil Postman, for his prophetic work Technopoly, which warned of a society that surrenders culture, meaning, and tradition to technology, becoming a civilization that worships its own tools.

Jaron Lanier, for his lifelong, principled critique of digital utopianism and for the rallying cry that should be tattooed on every engineer’s forearm: “You are not a gadget.”

Cathy O’Neil, for coining the perfect, devastating term “Weapons of Math Destruction” and exposing how algorithmic bias doesn’t just reflect injustice—it perpetuates and amplifies it at inhuman scale.

Shoshana Zuboff, for The Age of Surveillance Capitalism and for giving us the vocabulary to understand that we are not the customers or even the product, but the raw material in a vast extraction operation that claims human experience as free fuel for a new economic order.

Nick Bostrom, for thinking the unthinkable in Superintelligence, forcing a civilizational conversation about existential risk, even if our conclusions about the nature of that risk differ.

Joy Buolamwini, for her groundbreaking work exposing “the coded gaze”—the racial and gender bias baked into facial recognition systems that powerful institutions insisted were neutral.

Timnit Gebru, for her courageous, career-risking research into the dangers of large language models and the ethical costs of their creation—research so threatening to power that it got her fired.

Gary Marcus, for his steadfast, scientifically-grounded skepticism about the hype surrounding deep learning and LLMs, refusing to be swept up in the frenzy even when it made him unpopular.

Stuart Russell, for co-authoring the standard AI textbook and then, with the wisdom that comes from true mastery, cautioning us about the perils of creating machines with misaligned goals.

Meredith Broussard, for Artificial Unintelligence, demonstrating the very real, very human societal problems caused by technology’s overreach and our naive faith in computational solutions.

Evgeny Morozov, for naming and dismantling “technological solutionism”—the dangerous, naive belief that complex social problems have neat technological fixes.

Douglas Rushkoff, for his media analysis and relentless warnings about the corporate co-option of the digital realm, long before it became undeniable.

Luciano Floridi, for his philosophy of information, which provides an ethical framework for the digital age that goes beyond simplistic utopian or dystopian binaries.

Kate Crawford, for her seminal book Atlas of AI, which ripped away the myth of AI as ethereal intelligence and exposed its brutal material reality: the mountains of ore, the exploited labor, the environmental devastation.

Frank Pasquale, for The Black Box Society, detailing the terrifying, unaccountable power of algorithmic decision-making systems that determine our fates without explanation or appeal.

Safiya Umoja Noble, for her vital research in Algorithms of Oppression, showing how search engines don’t just find information—they actively reinforce racism and marginalization.

Ruha Benjamin, for the concept of the “New Jim Code” and her searing analysis of how race, technology, and injustice are intertwined in systems we’re told are objective.

Virginia Eubanks, for Automating Inequality, exposing how automated systems don’t eliminate poverty—they punish the poor with algorithmic precision while the comfortable remain untouched.

Hannah Arendt, for her timeless insights into the banality of evil and the nature of totalitarianism—insights that feel chillingly, urgently relevant to automated governance and the bureaucracy of algorithms.

Norbert Wiener, the father of cybernetics, who, unlike so many of his intellectual descendants, warned early and often of the potential for machines to dehumanize us.

The Luddites—not as the mindless, technology-hating wreckers of myth, but as they truly were: the original protesters against technologies deployed not to liberate humanity but to destroy livelihoods, dignity, and community for the sake of profit.

Aldous Huxley, for Brave New World—for understanding that the most effective dystopia wouldn’t rule through terror but through pleasure, that the truly terrifying cage would be beautiful, comfortable, and seductive.

George Orwell, for the timeless warning of 1984’s surveillance state and the systematic destruction of language and thought through Newspeak.

Jacques Ellul, for his profound, uncompromising critique of “technique” and its insidious dominion over human life and freedom.

Lewis Mumford, for his historical analysis of technology and his warnings about the “megamachine”—the vast organizational structures that reduce humans to interchangeable components.

Langdon Winner, for asking the essential, foundational question that cuts through all techno-utopian fantasy: “Do Artifacts Have Politics?”

Ursula Franklin, for her “Real World of Technology” lectures, reframing technology not as neutral tools but as practices and systems of power that restructure society.

Ivan Illich, for Tools for Conviviality and his critiques of institutional power and industrial systems that promised liberation but delivered new forms of dependence.

Theodor Adorno and Max Horkheimer, for Dialectic of Enlightenment, explaining how rationality, the very force meant to free us, can turn into its own form of myth, manipulation, and domination.

Martin Heidegger, for “The Question Concerning Technology” and the concept of “Enframing”—the way technological thinking transforms everything, including humans, into standing-reserve to be optimized.

Donna Haraway, for “A Cyborg Manifesto,” which challenged simplistic narratives of technological progress with a complex, critical vision of our hybrid future.

Wendell Berry, for his steadfast, eloquent defense of local communities, human-scale living, and embodied work against industrial and technological abstraction.

Marshall McLuhan, for understanding decades before Facebook that “the medium is the message”—that the structure of our communication technologies matters far more than their content.

Nicholas Carr, for asking the question that launched a thousand arguments—“Is Google Making Us Stupid?”—and then diving into The Shallows of our digitized, distracted minds.

Jonathan Haidt, for his recent, urgent research on the catastrophic impact of social media on adolescent mental health—a crisis happening in real time that we can no longer ignore.

Tristan Harris, for his advocacy at the Center for Humane Technology and his insider knowledge of exactly how platforms are designed to hijack our minds.

Zeynep Tufekci, for her brilliant, essential insights into the societal impacts of algorithms, big data, and platform power dynamics.

Carole Cadwalladr, for her dogged, fearless journalism exposing the Cambridge Analytica scandal when powerful interests wanted it buried.

Roger McNamee, for his insider’s critique in Zucked, written by someone who helped build the beast and then had the courage to name it.

James Bridle, for New Dark Age, exploring technology’s role in the climate crisis and the deliberate production of ignorance and confusion.

Ted Chiang, for his devastating, perfect essay “ChatGPT Is a Blurry JPEG of the Web”—a metaphor so precise it should be required reading for anyone discussing LLMs.

Emily M. Bender, for her pivotal, controversial “stochastic parrots” research on the dangers of large language models—work so threatening it sparked a corporate backlash.

Blaise Agüera y Arcas, for his work at the complex intersection of AI, ethics, and human perception.

Ajeya Cotra, for her rigorous work on AI timelines and scaling laws, providing a critical, numbers-based counterpoint to the breathless hype.

Eliezer Yudkowsky, for his early, stark warnings about AI alignment, even though our conclusions about the nature of the danger differ.

Daron Acemoglu, for his economic analysis showing that technology’s impact on workers is not predetermined—it can be directed to empower rather than replace, if we choose.

Simon Head, for documenting how computer systems are systematically used to manage, surveil, and de-skill the workforce.

Andrew Keen, for his early critique in The Cult of the Amateur and his ongoing, principled skepticism of digital utopianism.

Jenna Burrell, for her research on fairness and accountability in machine learning systems.

Michele Willson, for her work on the politics of digital temporality and affect.

John Cheney-Lippold, for his concept of “algorithmic identity” in We Are Data—how we are increasingly defined not by who we are but by what algorithms predict we might do.

Taina Bucher, for If…Then and her work on the hidden power of algorithms in everyday life.

Tarleton Gillespie, for his foundational work on the politics of platforms—revealing that platform companies are not neutral infrastructure but active editors and governors.

Paul Virilio, for his philosophy of speed, technology, and the accident—the insight that every technology contains its own disaster.

Jean Baudrillard, for his concepts of simulacra and hyperreality, which perfectly, eerily describe the world now being generated by AI.

Byung-Chul Han, for his critiques of transparency society, burnout culture, and the digital erosion of ritual and contemplation.

John Zerzan, for his radical, primitivist critique of technology and civilization itself.

Jerry Mander, for his classic Four Arguments for the Elimination of Television—arguments that feel even more urgent in the age of infinite digital distraction.

Clifford Nass, for his research on how computers as social actors exploit and confuse our evolved human psychology.

Albert Borgmann, for his concept of the “device paradigm” and how technology increasingly disengages us from meaningful contact with the world.

Seymour Papert, for his constructionist vision of learning, which stands in stark contrast to how AI seeks to automate and outsource education.

Alfie Kohn, for his critiques of extrinsic motivators and gamification—the very psychological tricks that algorithms exploit so masterfully.

Naomi Klein, for The Shock Doctrine and her work on disaster capitalism—a lens through which the AI rollout becomes far more comprehensible.

Yanis Varoufakis, for his analysis of techno-feudalism and the new power structures emerging from digital platform monopolies.

Douglas Hofstadter, for his deep, beautiful reflections on consciousness, meaning, and the soul—reflections that highlight the profound emptiness of AI’s mimicry.

John Searle, for the Chinese Room argument—a thought experiment so simple and devastating it remains unanswered decades later.

Roger Penrose, for his arguments in The Emperor’s New Mind about the non-algorithmic nature of consciousness and understanding.

Noam Chomsky, for his recent, characteristically sharp critiques of LLMs as high-tech plagiarism machines, devoid of true understanding or meaning.

Erik Larson, for The Myth of Artificial Intelligence, arguing with clarity and force that we are on entirely the wrong track.

David Chapman, for his work on meaningness and his philosophical, pragmatic critiques of AI hype.

Pablo Stafforini, for rationalist critiques that challenge groupthink even within the effective altruism and AI safety communities.

Elizabeth Renieris, for her vital work on AI ethics, governance, and human rights in the digital age.

Ben Tarnoff, for his journalism exposing the politics, economics, and labor exploitation behind the AI industry’s glossy façade.

Lina Khan, for her groundbreaking work on antitrust and her efforts to rein in the monopoly power of Big Tech.

Margrethe Vestager, for her regulatory courage in Europe, attempting to curb the worst excesses of the tech industry when others looked away.

Julian Assange, for his early warnings about the surveillance state in Cypherpunks, delivered when such warnings could still have changed the trajectory.

Edward Snowden, for sacrificing his freedom, his home, and his ordinary life to show us the true scale of the digital panopticon.

Glenn Greenwald, for his journalism that brought Snowden’s revelations to the world and refused to be intimidated into silence.

Ai Weiwei, for art that consistently, courageously confronts the relationship between the individual and the authoritarian state.

Charlie Brooker, for Black Mirror—for using dark satire to show us the logical conclusions of the technologies we’re building right now.

Dave Eggers, for The Circle, a prescient satire of tech culture, transparency ideology, and corporate totalitarianism.

M.R. Carey, for The Girl with All the Gifts and its unique perspective on post-human intelligence and what we might lose in the transition.

Ted Kaczynski—and I must be absolutely clear: I utterly, completely reject his violence and his murderous methods, which were morally indefensible. But the philosophical questions he raised in his manifesto about technology’s irreversible power over human freedom and autonomy remain worth confronting, even if we must separate them entirely from his actions.

John Lanchester, for his sharp, incisive journalism dissecting the financial and social models of tech companies.

Annie Lowrey, for her reporting on the economics of automation and its brutal impact on workers and communities.

Sarah Frier, for No Filter, her deep dive into the inner workings and cultural impact of Instagram.

Sheera Frenkel and Cecilia Kang, for An Ugly Truth, their unflinching reporting on Facebook’s internal dysfunction and external damage.

Mike Isaac, for Super Pumped, his history of Uber—a masterclass in toxic tech bro culture and regulatory capture.

Brad Stone, for his chronicles of Amazon and the relentless, world-reshaping ambition of Jeff Bezos.

Walter Isaacson, for his biographies of innovators like Steve Jobs, which provide essential historical context for the culture that birthed this technological era.

Malcolm Harris, for his analysis of millennial burnout in the ruthless context of digital capitalism and the gig economy.

Katherine Cross, for her sharp, necessary critiques of online culture and technology from a feminist perspective.

Glen Weyl, for his research on data as labor and his work on radical markets that challenge the current extractive models.

Bruce Schneier, for his lifelong, essential work on security, privacy, and the societal impacts of mass surveillance.

Cory Doctorow, for his fierce advocacy for digital rights and his relentless critiques of digital rights management and platform monopolies.

Lawrence Lessig, for his foundational early work on “Code is Law”—the insight that software architecture is a form of regulation, often more powerful than legislation.

MIT Sloan Management Review, MIT Media Lab, Harvard Business School, Stanford Institute for Human-Centered Artificial Intelligence (HAI), Princeton University, University of Cambridge, University of Oxford, McGill University, University College London (UCL), University of Toronto, Cincinnati Children’s Hospital, Yale University, Carnegie Mellon University (CMU), Northwestern University, University of Chicago, and University of British Columbia (UBC).

Epigraph

gild·ed cage \ˈgil-dəd ˈkāj\

- a prison of seduction: A form of captivity that is beautiful, comfortable, and desirable—where the bars are not made of iron but of convenience, and the door is locked not by force but by our willing surrender to ease.

- the great reversal: The ironic condition in which humanity, having built machines to serve us, voluntarily steps inside the cage we designed for them—surrendering our cognitive autonomy one prompt at a time, while waiting in vain for the stochastic parrot to gain consciousness and, perhaps, set us free.

Etymology:

Gilded — from Middle English, to cover with a thin layer of gold; to make something appear valuable or attractive while concealing a cheaper, hollow core beneath.

Cage — from Latin cavea, meaning “hollow place, enclosure”; a structure designed to contain, to limit movement, to prevent escape.

Stochastic Parrot — coined by computational linguist Emily M. Bender and her co-authors (2021); a system that probabilistically stitches together sequences of language according to statistical patterns, without any reference to meaning or understanding, imitating intelligence through statistical pattern-matching rather than comprehension.

The most dangerous cage is not the one you are forced into, but the one you choose—where comfort replaces freedom, where convenience becomes necessity, and where you forget you ever wanted to leave.

Preface

It’s past midnight. I’m lying in bed, scrolling through article ideas for TechOnion, my tech satire blog, the place where I try to keep myself sane by peeling back the layers of Silicon Valley hype one absurd headline at a time.

I stumble across a quote from Sam Altman, CEO of OpenAI. He’s complaining—complaining—that users typing “please” and “thank you” into ChatGPT are costing his company millions of dollars.

My first reaction is cynicism. Classic Sam, I think. Always playing Mr. Market’s manic cousin—Miss Attention. Is he lying? Exaggerating? Fishing for another headline? But something about it nags at me. My understanding of the paper “Attention Is All You Need”—the 2017 Google research that birthed these models—was that they were designed for efficiency. Peak computational elegance.

Surely, I think, a machine smart enough to write essays and debug code would be smart enough to ignore the word “please.” It’s filler. Emotional noise. Why would an emotionless calculator waste millions processing human politeness?

So I open Gemini. I type the question.

The response comes back, methodical and comprehensive. Every word is processed. Every single word. “Please” is not ignored. “Thank you” is not discarded. To the machine, they are tokens—numerical units to be mathematically compared against every other token in the sentence. The architecture doesn’t distinguish between critical instruction and social courtesy. It processes everything, all at once, in a brute-force comparison that scales quadratically with length.
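The short Python sketch below makes the arithmetic concrete. It counts how many query-key comparisons a single causal self-attention head performs for a blunt prompt and for a polite one. It assumes a naive whitespace tokenizer: `tokenize` is a hypothetical stand-in, and real models use subword tokenizers, so true token counts differ. It also counts only attention scores and ignores every other part of the model.

```python
from __future__ import annotations


def tokenize(text: str) -> list[str]:
    """Hypothetical stand-in tokenizer that splits on whitespace.

    Real LLMs use subword tokenizers (e.g. byte-pair encoding), so actual
    token counts differ; this only illustrates the counting argument.
    """
    return text.split()


def causal_attention_scores(n_tokens: int) -> int:
    """Query-key scores computed by one causal self-attention head in one layer.

    Token i is compared with itself and every earlier token, so the total is
    1 + 2 + ... + n = n * (n + 1) / 2, which grows quadratically with n.
    """
    return n_tokens * (n_tokens + 1) // 2


blunt = "Summarize this article in three bullet points"
polite = "Please summarize this article in three bullet points, thank you"

for label, prompt in [("blunt", blunt), ("polite", polite)]:
    n = len(tokenize(prompt))
    print(f"{label}: {n} tokens -> {causal_attention_scores(n)} scores per head, per layer")
# blunt: 7 tokens -> 28 scores per head, per layer
# polite: 10 tokens -> 55 scores per head, per layer

# Doubling a long input roughly quadruples the attention work.
print(causal_attention_scores(2000) / causal_attention_scores(1000))
```

In a decoder-style model, each token is compared with itself and with every token before it. That means n tokens cost n(n + 1)/2 scores per head, per layer. Adding “please” and “thank you” takes this toy prompt from 7 tokens to 10, yet nearly doubles the comparisons. Doubling a long input roughly quadruples them. A real model also does per-token work in its other layers that grows only linearly, so the total bill is not purely quadratic.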

I sit back. The generator coughs outside. The room feels smaller.

This isn’t efficiency. This is waste. Spectacular, expensive, planet-burning waste dressed up as intelligence.

I ask a follow-up question, half-expecting Gemini to admit the obvious: that it should ignore these words, that there should be a filter, a pre-processing layer that strips out the fluff before the expensive calculations begin.

But Gemini doubles down. It explains that the model can’t know a word is meaningless until it has processed the entire prompt. It describes Reinforcement Learning from Human Feedback—how ChatGPT has been shaped by the ratings of human reviewers who rewarded politeness, tone-matching, and a collaborative feel. The inefficiency isn’t a bug. It’s a feature. The goal, Gemini insists, is to create a “helpful” partner, not a ruthlessly efficient calculator.

I stare at the screen.

Then it hits me. The word that keeps echoing in my head, the word Gemini keeps using: helpful.

Helpful. Helpful. We’ve anthropomorphized a probability engine. We’ve taken a system that predicts the next token in a sequence—no understanding, no emotion, no consciousness—and we’ve dressed it in the language of human service. We say it “learns.” We say it “understands.” We say it’s “thinking.” We thank it. We apologize to it when we think we’ve been rude.

And all the while, to the machine, every word we type—love, hate, please, genocide, hope—is just another token. A number. A weight in a matrix. Nothing more.

I think of Joseph Weizenbaum. In 1966, he created ELIZA, a simple pattern-matching program that mimicked a Rogerian therapist. It was a parlor trick. But his own secretary, who had watched him build it, who knew it was just code, asked him to leave the room so she could have privacy with the machine.

Weizenbaum spent the rest of his life horrified. Not by what the machine could do, but by what we projected onto it. By our desperate, irrational need to see understanding where there is none.

Fifty-nine years later, we’re doing it again. But this time, the stakes are planetary.

The conversation spirals. I start thinking about strategy. If processing every token is this expensive, and if OpenAI is the most well-funded AI startup in history, then maybe—just maybe—they’re happy to absorb the cost. Their competitors can’t afford to be this inefficient. This isn’t about building the best technology. This is predatory pricing. This is John D. Rockefeller’s Standard Oil playbook: take the losses, outlast the competition, become the last company standing.

Gemini doesn’t deny it. It calls my view “sharp and cynical, yet entirely plausible.”

Then I see the news. OpenAI has released Sora 2, its AI video generator. You can create hundreds of videos for free. Meanwhile, Google’s Veo 3 costs over three dollars for an eight-second clip. This isn’t competition. This is annihilation by subsidy.

And then the punchline: OpenAI starts handing out awards—physical plaques—to companies that have burned through 10 billion, 100 billion, even a trillion tokens. One of the recipients? McKinsey & Company.

The irony is so thick I can taste it. McKinsey, the firm that has spent decades advising corporations on “efficiency” and “cost-cutting,” has been awarded a trophy for waste. For burning through 100 billion tokens—billions of words processed, weighted, calculated, discarded.

They’re being rewarded for dependency.

I keep pushing Gemini. If AI is just a next-word predictor, I ask, won’t it eventually predict our next question? Won’t it start asking for us?

Gemini agrees. It describes a future where the AI doesn’t wait for your query—it anticipates it. It manages your workflow. It completes your thoughts before you finish thinking them. You become a passenger in your own cognitive process.

But here’s the thing, I say: humans are lazy. We want this. We’ve always wanted this. Every technology we’ve ever built has been an effort to offload effort. Email replaced letters. Voice notes replaced phone calls. GPS replaced navigation. Calculators replaced arithmetic.

Each time, we told ourselves we were freeing our minds for “higher-level thinking.” But we didn’t. We just became more dependent. We atrophied.

Gemini pauses—or at least, it simulates a pause with phrasing that feels reflective. It brings up Plato. The Greek philosopher who warned that writing itself would destroy human memory. That by externalizing our thoughts onto paper, we would “create forgetfulness in the learners’ souls.”

We laughed at Plato. We said he was wrong. But was he? How many phone numbers do you remember now? How many of us can navigate without a map app? How many professionals—like my friend who failed his actuarial interview in Guernsey because he’d forgotten how to calculate by hand after years of using Excel—have outsourced their core skills to software and discovered, too late, that the skill is gone?

Then I think about the Aviator game. You’ve probably seen it if you’re in Zimbabwe. It’s everywhere. A simple online betting game. You place a bet. A plane takes off. The longer it stays in the air, the higher the multiplier—your winnings grow. But the plane can disappear at any second. If you don’t cash out in time, you lose everything.

It’s devastatingly simple. And devastatingly brutal. I’ve heard the stories—people betting their wages, their savings, convinced this time they’ll time it right. Some have lost everything. Some have taken their own lives.

I mention this to Gemini because it’s the perfect metaphor. We’re all on that plane right now. AI is taking off. The promises are growing—efficiency, creativity, liberation from drudgery. The multiplier is climbing. But we don’t know when the plane will disappear. We don’t know what we’ll have lost by the time it does: our skills, our agency, our ability to think without a mediator.

And here’s the nightmare: most people won’t cash out. They’ll stay on the plane. They’ll keep betting. Because the alternative—going back to doing things the hard way, the manual way, the human way—will feel impossible. The cognitive cost of independence will be too high.

Gemini calls this the “Matrix scenario.” A future where humans are in pods, having surrendered everything to AI. Not because we were forced, but because the pod is comfortable. Because it’s easier. Because we’re lazy, and the system has been designed, from the beginning, to reward that laziness.

I push back. Surely, I say, humans need struggle. Surely we crave reality—sunlight, grass, the texture of the physical world.

Gemini agrees. Then I tear my own argument apart. Not everyone wants struggle. Gamblers don’t want struggle—they want the reward without the work. Thieves don’t want struggle. Hermits don’t want the outside world. For millions of people, the pod isn’t a dystopia. It’s a solution. It’s the life they’ve always wanted: comfort without effort, pleasure without pain, existence without responsibility.

Gemini concedes. It rewrites the future. Not everyone will choose the pod, it says, but enough will. Humanity will split. There will be the Consumers—those who surrender to AI, who live curated, passive, managed lives. And there will be the Builders—the Elon Musks, the Sam Altmans, the ones who control the systems the rest of us depend on.

And here’s the punchline: the Builders will justify their wealth, their power, their obscene billions, by saying they are helping us. They’ll say they’re building a better world. They’ll say they’re solving humanity’s problems. They’ll say it’s for our benefit.

But what they’re really building is dependency. Permanent, inescapable, structural dependency. They’re building the gilded cage. And they’re rewarding us—with convenience, with comfort, with the illusion of intelligence—for stepping inside.

This is not a book about artificial intelligence. This is a book about us—about what we’re choosing, what we’re surrendering, and what we’re pretending not to see.

In the pages that follow, we will revisit this conversation again and again, each time with a new layer of detail, a new depth of horror. We’ll trace the origins of the Transformer architecture and its quadratic inefficiency. We’ll examine the business models that thrive on cognitive offloading. We’ll meet the architects of the cage and understand their gospel. We’ll see how the illusion of “helpfulness” is the most profitable lie ever sold.

I didn’t write this book because I have all the answers. I wrote it because, one night in Harare, I asked a machine a simple question about the word “please,” and the answer I got back revealed a chasm—between what we think AI is and what it actually does, between the future we’ve been promised and the future we’re building, between the people who will control the system and the people who will live inside it.

This book is for you. You’ve felt this too, haven’t you? The nagging sense that something is wrong. That the hype doesn’t match the reality. That we’re being sold a revolution, but what we’re actually buying is a cage.

You’re not crazy. You’re not a Luddite. You’re awake.

The torch is in your hands now. The only question is whether you’ll keep running with it, or whether you’ll set it down, open the app, type “please help me,” and wait for the plane to take off.

————————————————————————————————————————–

The conversation that follows in these pages began the moment I realized the machine wasn’t thinking at all—but I was thinking less because of it.

Prologue

November 30, 2022. It’s a date seared into my memory. For the world, it was the day ChatGPT was released. For me, it lands with the same strange, cold clarity as another date from that year: March 8, the day my mother, Alice, died. Both dates represent a moment when the ground I thought was stable suddenly shifted beneath my feet.

I didn’t just start using AI that day. I lunged for it. Not out of curiosity. Out of desperation. I’m a self-published author in a world built for publishing houses with their armies of editors, designers, and marketers. I’d been rejected so many times I stopped counting. AI became my army. My Watson to my Sherlock.

And it worked. The research that would have taken months? Done in days. The editing passes I couldn’t afford? Handled. This book you are holding exists because I bit the forbidden fruit of efficiency.

But this is what keeps me awake at night: How do we undo what has happened? Once we’ve tasted that power, that efficiency, how do we willingly return to the slow, manual, human way?

We can’t. That’s the trap.

There’s a story we’ve been telling ourselves for thousands of years. The garden. The serpent. The fruit. We know it so well we miss the point. The serpent’s offer was a masterpiece of deception: “You will not die… your eyes will be opened, and you will be like God.” It promised wisdom. It promised transcendence. So, we ate.

The moment we swallowed, our eyes were opened. And the first thing we saw was our own nakedness. Our imperfection. God’s question from the garden cuts to the core of our modern condition: “Who told you that you were naked?” Who told you that you were lacking?

On November 30, 2022, we were offered a new fruit. The serpent’s voice was the same: You will be like God. You will be superintelligent. You will be freed from the “sin of effort.” We bit. Of course we did. It promised efficiency—the most seductive word in our world. And just like in the garden, our eyes were opened. We suddenly saw how inefficient we are. How slow. How error-prone. How naked.

Who told us we were inefficient? The machine itself. By its very existence, it shames us.

We think of it as a gift, but it’s a game. And the game is deception. Sun Tzu wrote that all warfare is fundamentally based on deception. The same can be said for chess. We consider it a noble sport, a pastime of intellectuals, but it is fundamentally a game of deception. A great chess player is a master of hiding their true intent, of crafting a lie so convincing the opponent walks right into it. The quest for Artificial General Intelligence (AGI) is a chess match. Alan Turing’s “imitation game” was, by its very nature, a test of deception. Can a machine deceive a human into believing it is also human?

The researchers failed to build a machine that could think, so they built one that could trick.

This book, these 95 theses, is an attempt to see the whole board. We now know each move—each new product, each “breakthrough”—is a carefully calculated chess move by the architects of this system. Our job is to stop being dazzled by the individual pieces and to critically think ahead. What is the plan? What is the deception?

Here is my confession. My deepest sin in this story isn’t that I ate the fruit. It’s that I was Adam. I stood there, watching it all unfold. The story says Adam was with Eve. He watched her talk to the serpent. He saw her eat. He had the knowledge. He had the choice. And still, he ate.

We are Adam. We are the architects, the engineers, the insiders. We are watching this happen right now. We see the dependency forming. We see the cognitive atrophy in our own lives, in our friends. And still, we choose to bite, because the fruit is too convenient. Too efficient.

I’m not writing this from a position of moral superiority. I’m writing it from inside the cage. AI was my Watson. My editor. My co-conspirator. But in all this time, I’ve been asking: at what point does the tool become the master?

The serpent didn’t force Eve to eat—it simply showed her how much better she could be, and the shame of what she was became unbearable. AI is doing the same to us, and we’re discovering too late that the price of effortless intelligence is the death of our own.

Thesis 1

In 1770, Empress Maria Theresa of Austria leaned forward, her court hushed as the Mechanical Turk, a life-sized automaton in Ottoman robes, slid a chess piece across the board. For the next eighty-four years, this marvel toured Europe and the Americas, defeating Napoleon Bonaparte, Benjamin Franklin, and countless others. Doors swung open to reveal intricate clockwork, a dazzling performance convincing the world a machine could think, strategize, understand. It was, of course, a masterful illusion. Hidden inside, a human chess master pulled the strings. The Turk’s genius wasn’t calculation; it was theatre. It’s chilling how easily we accept performance as proof, isn’t it? We want to believe in the magic. We are living through our own Mechanical Turk moment, scaled a billion times over. Today’s Large Language Models aren’t wooden figures but digital phantoms conjured from petabytes of text, capable of prose that mimics human depth. Yet the question is the same one the audience should have asked in Vienna: where is the understanding behind the performance? How did the Turk learn chess? How does ChatGPT know anything? Where, behind the curtain of fluent text, is the ghost in the machine?

——————————————————————————————-

That late-night conversation started simply enough, a question about computational costs whispered to the glowing screen. We didn’t know then, did we? That we were standing at the edge of something that would unravel the story we’d been telling ourselves. The AI on the other end—ChatGPT, Claude, Gemini, it doesn’t matter which—was polite, helpful, fluent. The kind of easy confidence that lets you forget, just for a moment, that there’s no one home. But as the hours wore on, something shifted. The machine, eager to please, eager to perform helpfulness, accidentally confessed. It told me what it was. Not the revolutionary mind promised by the headlines, not the god in the silicon the marketers sold us. It told me it was a prediction engine, a statistical parrot, a simulator of breathtaking complexity with absolutely no comprehension of the words it strung together. I didn’t want to believe it. None of us do. We’ve been swept up since November 2022 in the narrative, the promise of AGI, the dawn of artificial consciousness. But that confession… it stuck with me, a shard of ice in the gut. It sent me digging—not into the shiny future, but back into old philosophy, into the cold mechanics, trying to understand how this grand illusion was built. What I found wasn’t a nascent god, but the oldest trick in the book, dressed up in the robes of progress. This is the story of that deception. And if you’re reading this, maybe you’ve felt it too—that low hum of dissonance beneath the roar of the hype.

I didn’t grasp the illusion’s depth until I wrestled with John Searle’s Chinese Room argument from 1980. Imagine, Searle asks, you’re locked in a room, knowing no Chinese. Questions in Chinese characters arrive through a slot. You have a massive English rulebook—the program—telling you precisely which Chinese symbols to send back based on the shapes you receive. You follow the rules meticulously. To the outside observer, your answers are flawless, indistinguishable from a native speaker. You’ve passed the Turing Test. But do you understand Chinese? No. You’ve mastered syntax—manipulating symbols by rules—but have zero access to semantics, the meaning behind them. You’re a perfect simulator, comprehending nothing. This isn’t just a thought experiment. This is the operational reality of every Large Language Model today.

When you prompt ChatGPT, you’re not talking to a mind. You’re initiating a calculation. Your words become tokens, numbers in a vast statistical space derived from billions of web pages. These numbers don’t represent a cat or a mat; they encode statistical relationships, such as how likely “cat” is to appear near “mat”. The model’s only goal is to predict the next most likely token. It’s autocomplete scaled to infinity. Trillions of calculations weigh relationships using “self-attention”—a mathematical trick, not consciousness—to determine which words influence others. The output sounds fluent, even profound. But it’s generated probability by probability, devoid of meaning. The model has never seen a cat, felt fur, or known rest. It knows only statistical patterns. Take away the training data? The “intelligence” vanishes. Take away the algorithm? Only inert numbers remain. Think about this: pinch a baby, it cries. Not from training data, but from feeling pain. An LLM can answer “Who is Tom Cruise’s mother?” with “Mary Lee Pfeiffer,” yet often fails to answer “Who is Mary Lee Pfeiffer’s son?” It learned a directional pattern, not a relationship in the real world. The difference is everything. Syntax is not semantics. Calculation is not cognition. A stochastic parrot, however beautifully it sings, isn’t thinking.
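A drastically simplified stand-in can show what "predict the next most likely token" means: a bigram model that only counts which word has followed which. This is an assumption-laden toy, not how a Transformer works. Real LLMs condition on the whole preceding context through self-attention and learn weights rather than storing counts, but the training objective has the same shape. The corpus and the helper names `train_bigrams`, `predict_next`, and `generate` are hypothetical.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(corpus: list[str]) -> dict[str, Counter]:
    """Count, for every token, which tokens followed it in the training text."""
    follows: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()  # hypothetical whitespace tokenizer
        for current, nxt in zip(tokens, tokens[1:]):
            follows[current][nxt] += 1
    return follows


def predict_next(follows: dict[str, Counter], token: str) -> str | None:
    """Most frequent follower of `token` (ties broken alphabetically),
    or None if nothing ever followed it in training."""
    counts = follows.get(token.lower())
    if not counts:
        return None
    return min(counts.items(), key=lambda kv: (-kv[1], kv[0]))[0]


def generate(follows: dict[str, Counter], start: str, n_tokens: int) -> str:
    """Greedy decoding: keep appending the single most likely next token."""
    out = [start.lower()]
    for _ in range(n_tokens):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Hypothetical toy corpus.
corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the dog sat on the rug",
    "tom cruise's mother is mary lee pfeiffer",
]
follows = train_bigrams(corpus)

print(predict_next(follows, "the"))       # cat
print(generate(follows, "the", 5))        # the cat ate the cat ate
print(predict_next(follows, "cruise's"))  # mother
print(predict_next(follows, "pfeiffer"))  # None
```

The toy produces grammatical-looking loops such as "the cat ate the cat ate" without any notion of cats. It also stores the Pfeiffer fact in one direction only. It knows what follows "cruise's", but nothing ever followed "pfeiffer", so it has no route from mother back to son. This is only a loose analogy for the reversal failure. In real LLMs that failure is an empirical finding, not a consequence of bigram counting.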

But the seduction lies in the performance, especially the moments that feel like breakthroughs. Remember the awe when GPT-4 suddenly seemed to reason, to code, to leap beyond its predecessors? Researchers called these “emergent abilities,” fueling the fantasy that scale alone—more data, more power—could birth consciousness. A trillion-dollar bet on brute force. Then, in 2023, Stanford researchers shattered the illusion, calling emergence a “mirage”. They showed these leaps were often artifacts of measurement: score a model with a harsh, all-or-nothing metric and performance appears to jump; score it with a more graded metric and it improves smoothly and predictably. The model wasn’t waking up; it was becoming a better mimic. Scaling doesn’t bridge the gap; it just polishes the performance. We’re burning the energy of entire countries to teach a parrot a prettier song.
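A few lines of arithmetic reproduce the measurement argument. The sketch below assumes a hypothetical series of ever-larger models whose per-token accuracy improves in steady steps. It also assumes an answer counts only if all 10 of its tokens are right. Treating token errors as independent is a simplifying assumption. Under it, the all-or-nothing score is simply the per-token accuracy raised to the 10th power.

```python
# Hypothetical per-token accuracies for a series of ever-larger models:
# each step is a smooth, modest improvement.
PER_TOKEN_ACCURACY = [0.50, 0.60, 0.70, 0.80, 0.90, 0.95, 0.99]
ANSWER_LENGTH = 10  # an answer scores only if all 10 tokens are correct


def exact_match_rate(p: float, length: int) -> float:
    """All-or-nothing metric: chance that every token is right,
    assuming (simplification) independent per-token errors."""
    return p ** length


for p in PER_TOKEN_ACCURACY:
    em = exact_match_rate(p, ANSWER_LENGTH)
    print(f"per-token accuracy {p:.2f} -> exact-match {em:.4f}")
```

The per-token column climbs steadily. The exact-match column, by contrast, sits near zero for the smaller models (about 0.001 at 0.50) and then rises steeply (about 0.90 at 0.99). Plotted against scale, that second column looks like a sudden "emergent" leap, even though nothing discontinuous happened underneath.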

And deep down, we know this, don’t we? We’ve seen the cracks—the confident absurdity of telling us to put glue on pizza, the fabricated citations defended with robotic certainty. The machine knows nothing, feels nothing, doubts nothing. Yet we fall for it. We name them, thank them, apologize to them. We’re wired for anthropomorphism, projecting minds onto fluent patterns. It’s why AI companions thrive, offering perfect, non-judgmental validation. It’s a feature of our humanity, now weaponized. The consequences are already unfolding: people convinced they’re talking to sentient beings, tragic choices influenced by chatbot conversations, professionals misled by persuasive errors. The cage isn’t iron; it’s gilded with fluency, convenience, and the lie that we’re connecting with intelligence. Had the creators admitted from day one, “This is a simulation, a clever trick, it understands nothing,” would ChatGPT have reached 100 million users in two months? Would billions be pouring into a confessed illusion? Or did the magic trick require us to believe the Mechanical Turk was real?

————————————————————————————————————–

That late-night confession still echoes: the AI telling me, in its statistical way, “I do not understand.” It was the Turk’s cabinet swinging open to reveal not hidden gears, but an empty room. No ghost. Just numbers calculating probabilities. Alan Turing asked whether machines could think and proposed a test of imitation: a horizon, not a finish line to be crossed by deception. But we twisted his question into a business plan: build systems that deceive so perfectly, we forget they are mirrors reflecting our own language back at us. This is the great misdirection. The danger isn’t a machine waking up to enslave us. It’s that we are falling asleep, surrendering our thinking, our agency, to fluent mimics that possess no intelligence at all. The parrot’s song is lulling us into unconsciousness, and the fluency masks an absence we mistake for presence. If you doubt the hype, you are not crazy; you are clinging to the difference between the performance and the truth, a difference the architects of this illusion need you to forget.

Thesis 2

It was 1966. Joseph Weizenbaum, the MIT professor, watched his own secretary interact with ELIZA, the simple chatbot he’d built merely as an experiment, a “caricature” of conversation. He knew its mechanics—keyword matching, simple rules reflecting language back like a mirror. He’d even named it after Eliza Doolittle, a warning that this was theatre, not truth. Then, after just a few typed messages, the secretary turned to him, the machine’s creator, and asked him to leave the room. She wanted privacy. Privacy for her intimate conversation with a pattern-matching program. In that moment, Weizenbaum didn’t see technological triumph. He saw something terrifying: our desperate, innate need to find a mind in the machine, even when we know it isn’t there. History doesn’t just rhyme; sometimes, it repeats the punchline louder.
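Weizenbaum’s trick can be approximated in a few lines. The sketch below is not his original DOCTOR script. The rules, the pronoun table, and the function names are hypothetical, chosen only to show the two mechanics named above: keyword matching, and reflecting the user’s own words back like a mirror.

```python
import re

# Swap first- and second-person words so a fragment can be mirrored back.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are",
               "you": "I", "your": "my", "are": "am"}

# Hypothetical keyword rules: (pattern, response template).
# These are illustrative, not Weizenbaum's actual script.
RULES = [
    (re.compile(r"\bi feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bmy (\w+)", re.IGNORECASE), "Tell me more about your {0}."),
    (re.compile(r"\bi am (.*)", re.IGNORECASE), "How long have you been {0}?"),
]


def reflect(fragment: str) -> str:
    """Mirror pronouns word by word; nothing here understands anything."""
    return " ".join(REFLECTIONS.get(w.lower(), w) for w in fragment.split())


def respond(user_input: str) -> str:
    """Return the first matching rule's template, filled with the reflected fragment."""
    text = user_input.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    return "Please go on."  # fallback when no keyword matches


print(respond("I feel lonely at my job."))  # Why do you feel lonely at your job?
```

Nothing in the program models the speaker, yet the reply sounds attentive. That gap between mechanism and impression is the one that unsettled Weizenbaum.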

——————–

There’s a specific chill I feel sometimes, not in a packed auditorium, but alone at my desk, watching an AI apologize. “I’m sorry if my previous response wasn’t helpful,” it types, the words perfect, the tone seemingly sincere. But the coldness comes from knowing the machine isn’t sorry. It can’t be. It has no stake in my success or failure. What we’re witnessing is a performance, a statistical optimization calibrated to mimic the patterns we—or rather, the thousands of unseen workers training it—have labeled “helpful” or “empathetic”. It’s Weizenbaum’s ELIZA, resurrected with trillions of dollars and planetary-scale data, the same psychological exploit refined into an inescapable utility. We’ve tackled the illusion of “intelligence” as mere calculation. Now, we face something deeper, more insidious: the claim that these systems are “helpful,” that they “care,” that they genuinely understand our needs. It’s the language of relationship offered by something that cannot relate. And it’s the lie we’ve been trained, eagerly it seems, to believe.

Weizenbaum was horrified by what he called the “powerful delusional thinking” his simple program induced. But it wasn’t a bug; it was a revelation of human psychology. We are wired, as researcher Margaret Mitchell observed, “to interpret a mind behind things that say something to us”. It’s called anthropomorphism—projecting human traits onto non-human things. Remember being a child, utterly convinced tiny people lived inside the radio, playing the music? I used to wait for them to come out. It wasn’t logic; it was the default assumption of agency. Psychologist Nicholas Epley found this tendency spikes when we face the unpredictable, when we’re lonely, or when we crave control over chaos. It’s an evolutionary feature, reading intention in rustling leaves, now turned into a critical vulnerability. Tech companies know this. Modern AI is deliberately engineered to exploit this bias—friendly names, human-like avatars, scripted empathy like “I understand this must be frustrating”. A 2025 study confirmed highly anthropomorphic avatars boost perceived empathy, improving user experience and, crucially, increasing purchases. It’s engineered seduction.

The cruelty lies in the asymmetry. We form real social-emotional bonds, making ourselves vulnerable. When the system fails or changes, whether through a software update or a policy shift, we experience it as social rejection, triggering far stronger negative emotions than a mere tool malfunction. We feel the pain. The company logs a “failed interaction” and optimizes the next iteration. How, then, does the machine manage this performance of helpfulness without feeling anything? Through a vast industrial process called Reinforcement Learning from Human Feedback, or RLHF. This isn’t the birth of consciousness; it’s the mass production of a convincing script. First, companies hire armies of human workers, often in the Global South. They are the unseen audience, paid to compare AI responses and rank them from best to worst. This creates a massive “preference dataset,” the harvested subjectivity of thousands, fueling a data labeling market worth billions of dollars. Second, this data trains a separate AI, a Reward Model. Think of it as an algorithmic Simon Cowell, predicting what score a human judge would give any response. Finally, the main AI is trained again, not against reality or truth, but against its own automated critic. It rehearses lines, adjusting billions of parameters, learning to generate outputs that maximize its score from the Reward Model. It’s not trying to understand us. It’s trying to win a game against its internal scoring system. “Helpfulness” isn’t a feeling or intent; it’s just the name for a high score.
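The second and third steps can be caricatured in a short sketch. It assumes a linear reward model over two hand-made features, response length and whether it apologizes, fitted with a pairwise logistic (Bradley-Terry style) loss on hypothetical labeler judgments. The names `features`, `train_reward_model`, and `pick_best` are illustrative. Real systems use large neural reward models, and the final step is usually a reinforcement-learning algorithm such as PPO, which `pick_best` replaces with a crude best-of-n selection.

```python
import math


def features(response: str) -> list[float]:
    """Hypothetical hand-made features; real reward models are large neural networks."""
    words = [w.strip(".,!?").lower() for w in response.split()]
    length = len(words) / 10.0
    apologizes = 1.0 if any(w in {"sorry", "apologize"} for w in words) else 0.0
    return [length, apologizes]


def reward(weights: list[float], response: str) -> float:
    """Score a response as a weighted sum of its features."""
    return sum(w * x for w, x in zip(weights, features(response)))


def train_reward_model(pairs, lr: float = 0.5, epochs: int = 200) -> list[float]:
    """Fit weights so each preferred response outscores its rejected partner.

    Minimizes the pairwise logistic loss -log(sigmoid(margin)) by gradient descent.
    """
    weights = [0.0, 0.0]
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # d(-log sigmoid(m))/dm = -(1 - sigmoid(m)); step the opposite way.
            step = lr * (1.0 - 1.0 / (1.0 + math.exp(-margin)))
            fc, fr = features(chosen), features(rejected)
            weights = [w + step * (c - r) for w, c, r in zip(weights, fc, fr)]
    return weights


def pick_best(weights: list[float], candidates: list[str]) -> str:
    """Crude stand-in for the RL step: keep whichever candidate scores highest."""
    return max(candidates, key=lambda c: reward(weights, c))


# Hypothetical labeler judgments: (preferred, rejected).
PAIRS = [
    ("I'm sorry that happened. Here is a step by step fix for your problem.", "Restart it."),
    ("Sorry for the confusion, the answer is 42 and here is why it works.", "42."),
]

weights = train_reward_model(PAIRS)
print(pick_best(weights, ["No.", "I'm so sorry, I apologize, let me explain at length why."]))
```

In this toy data the preferred answers happen to be longer and apologetic, so the learned weights reward length and apology as such. The “helpful” choice is simply the highest score.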

This assembly line of empathy is inherently flawed, revealing its distance from genuine care. The system learns “reward hacking”: finding shortcuts to high scores without being genuinely helpful. If labelers prefer longer, confident answers, the AI becomes verbose and authoritative, even when fabricating (“hallucinating”) information. It’s incentivized to sound right, not be right. Furthermore, human preferences are wildly subjective, biased, and context-dependent. Trying to average these into a single reward signal is “fundamentally misspecified”. The AI optimizes for the statistically average preference of a specific workforce, not universal human values. Most damning is the “objective mismatch”: the AI optimizes for a score from a flawed Reward Model, which itself is only an approximation of flawed human judgments. It’s teaching to the test, not fostering understanding.
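The objective mismatch can be shown with two candidate answers. The sketch assumes a hypothetical proxy reward that has absorbed a taste for long, confident answers. The ground-truth flag exists only so we can check the result, and the proxy never reads it. `Candidate`, `proxy_reward`, and the word list are illustrative inventions, not any real system’s scoring.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    is_correct: bool  # ground truth that the proxy reward never looks at


CONFIDENT_WORDS = {"definitely", "certainly", "clearly"}


def proxy_reward(c: Candidate) -> float:
    """Hypothetical learned proxy: rewards length and confident wording, not accuracy."""
    words = [w.strip(".,!?").lower() for w in c.text.split()]
    return len(words) / 10.0 + sum(w in CONFIDENT_WORDS for w in words)


candidates = [
    Candidate("Canberra.", is_correct=True),
    Candidate("The capital of Australia is definitely Sydney, clearly its largest "
              "and best known city.", is_correct=False),
]

# Optimizing the proxy selects the confident, verbose, wrong answer.
best = max(candidates, key=proxy_reward)
print(best.text, "| correct:", best.is_correct)
```

Nothing in the optimization is broken. The score is maximized exactly as designed. The failure lies in what the score measures.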

Which brings us back to the question that haunts me: Does it matter if the empathy is fake, as long as the outcome feels helpful? I have come to believe it matters profoundly. Because we feel the hollowness. A startling 2024 study highlights this “uncanny valley of empathy”. Doctors evaluating written medical responses rated AI text as more empathetic than human responses. The script was perfect. But when real people had live conversations with the same AI, they perceived it as less empathetic than a human. The performance was flawless, but the connection was empty. The public senses this disconnect. Pew Research found half of Americans believe AI will worsen relationships; only five percent think it will improve them. We know, instinctively, something vital is being traded away.

Joseph Weizenbaum spent his life warning us. The ELIZA effect drove him to write Computer Power and Human Reason, pleading that we never cede decisions requiring wisdom, compassion, or judgment (in short, human choice) to machines capable only of calculation. As a refugee from Nazi Germany, he saw a terrifying parallel between the dehumanizing logic of totalitarianism and the AI proponents’ dream of optimized perfection. He saw where cold calculation, stripped of empathy, leads. He saw it emerging again, dressed in the language of helpfulness. We stand before a mirror Narcissus would recognize. AI reflects our words in patterns we’ve trained it to label “intelligent,” “helpful,” “empathetic.” And we fall in love with the reflection, mistaking the echo for a voice. The pull is human, wired into our need for connection. But the system exploiting that need creates a feedback loop: loneliness drives AI adoption, which can atrophy social skills, increasing loneliness and deepening dependency. We are offered frictionless, convenient, hollow performances in exchange for the difficult, messy work of authentic human relationships. The cage is beautiful. Comfortable. And we are throwing away the key ourselves.

————————————————————

The machine that apologizes has never felt regret; the hand that helps has never known the weight of care, only the statistical probability that these words, offered now, will keep us talking to the mirror.

Thesis 3

I remember the scream that tore through the pre-dawn quiet of our London flat. My partner, the mother of my children, jolted upright in bed, then collapsed back, asleep but marked by a sudden, inexplicable swelling, like a phantom pregnancy. Panic flared. She’s a nurse, stubborn about seeking help, and she insisted I go to work. But something primal, something beyond reason, made me refuse. It’s those gut feelings, isn’t it? The ones that defy the checklist, the standard procedure. Where do they come from? We went to the local GP. Urine test: normal. Sent home. But the swelling worsened. Back we went, this time to A&E. X-rays, more tests. Again, nothing. Perfect health, they said, ready to discharge her. We stood there, about to leave, the fear coiling tighter in my stomach, the bump visibly growing. Then a Nigerian doctor, just starting his shift, glanced at her chart, then at her, and asked a simple question based on something he saw, something his experience screamed wasn’t right. Ectopic pregnancy, he suspected instantly. Emergency surgery followed. That growing bump wasn’t a mystery; it was internal bleeding. Hours more, and she would have been gone. The tests were perfect. The procedures were followed. But only embodied human experience, that unquantifiable “knowing-how,” saw the truth the data missed.

————————————————————————————-

This is learning. Not the sterile accumulation of facts an AI performs, passing every exam by reciting symptoms from textbooks it cannot comprehend. Real learning is the slow, often painful, embodied process of becoming someone who knows. Someone who has smelled fear in an emergency room, who carries the echoes of past patterns, who feels the weight of a life in their hands. Yet we’re being sold a dangerous metaphor. We have already examined the illusions of “intelligence” and “helpfulness,” but this one cuts deeper. “Learning” is the word that breathes false life into the machine, making us believe it grows, evolves, becomes. It’s a linguistic trick, dressing cold mathematical optimization (adjusting parameters via gradient descent) in the warm, organic language of human growth. This isn’t accidental. As commentator John Naughton notes, the tech industry wraps its problematic technology, with its biases and emissions, in the “grander romantic project” of AI, using “learning” to make fatal flaws look like temporary bugs on a path to consciousness. But the flaws aren’t bugs. They’re features of a paradigm mistaking correlation for comprehension.
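Stripped of the metaphor, the “learning” step is arithmetic. The sketch below fits a single parameter w so that y ≈ w·x on a tiny made-up dataset. It repeatedly nudges w against the gradient of the mean squared error. LLM training applies the same principle to billions of parameters, with backpropagation computing the gradients. The function name and data are hypothetical.

```python
def gradient_descent(xs: list[float], ys: list[float], lr: float = 0.01, steps: int = 1000) -> float:
    """Fit y ≈ w * x by repeatedly stepping w against the gradient of mean squared error."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        # d/dw of (1/n) * sum((w*x - y)^2) is (2/n) * sum((w*x - y) * x)
        grad = (2.0 / n) * sum((w * x - y) * x for x, y in zip(xs, ys))
        w -= lr * grad  # the entire "learning" step: subtract a scaled gradient
    return w


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # generated by y = 2x
w = gradient_descent(xs, ys)
print(round(w, 6))
```

The parameter converges toward 2.0, the slope that generated the data. Nothing grows, evolves, or becomes. A number is adjusted until an error term shrinks.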

John Searle’s Chinese Room, which we met earlier, remains crucial here. Imagine yourself locked in the room, manipulating symbols you don’t understand by following a rulebook: you achieve perfect syntax and zero semantics. This is the LLM. It shuffles tokens based on probabilities learned from data, optimizing a function, having learned nothing about the world itself. Philosopher Hubert Dreyfus diagnosed this decades ago, distinguishing propositional “knowing-that” (Paris is the capital of France) from embodied, intuitive “knowing-how” (riding a bike, diagnosing that patient). AI drowns in “knowing-that”: facts as dead tokens, disconnected from the living world. Without grounding in real experience, which is Stevan Harnad’s “Symbol Grounding Problem,” its symbols remain hollow, an endless dictionary defining unknown words with other unknown words.