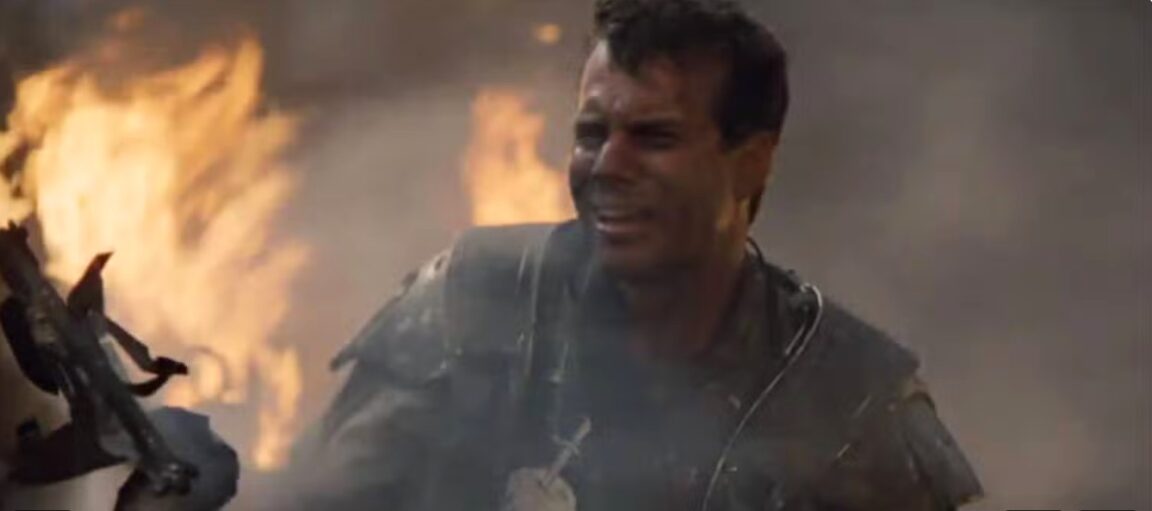

“That’s it, man. Game over, man. Game over!”

— Private Hudson, Aliens, 1986

The lights are flickering.

Not the romantic flicker of a candle — the kind that makes a dinner table feel intimate and warm. The violent, industrial flicker of overhead strip lighting that has taken a massive hit. Corporate fluorescent tubes in a corporate operations room, strobing in and out like the nervous system of a building that knows something is very wrong.

Private Hudson is on his feet.

He is not standing the way soldiers stand — upright, composed, the posture of a man in control of his situation. He is standing the way a man stands when the floor has disappeared from underneath him and his legs haven’t yet received the message. His hands are shaking. His rifle is somewhere. His voice — the voice of a trained marine, a man who signed up for danger, a man who accepted risk as the terms and conditions of his employment, who trained for years for exactly this kind of mission — has cracked. Completely. Irreversibly.

Sweat is running down his forehead. Real sweat. Not the polite perspiration of a man who has been having a nice jog in Hyde Park on a cool Sunday. It’s the cold, sudden, involuntary sweat of a man whose brain has just processed a threat and whose body has responded before his mouth found the words. His eyes are wide. Not with aggression. With something far worse than aggression.

With understanding. Deep understanding.

The specific, terrible, crystalline understanding of a man who has just grasped something he cannot un-grasp. A man who came into this room with every advantage his species could provide him — weapons, training, armour, a plan, colleagues, hardware, communication systems, years of accumulated expertise in exactly this kind of environment — and has just discovered, in real time, that none of it is sufficient. Not because the enemy is bigger. Not because the enemy has better weapons. But because the enemy thinks differently. Operates at a different level. Does not share a single assumption with the men and women in that room about how a fight is supposed to work.

“That’s it, man!”

He says it the way a man says something when he needs to hear it out loud to believe it. When the thought in his head is so enormous that saying it is the only way to confirm it is real.

“Game over, man!”

The second sentence comes faster. Lower. Less a declaration than a verdict. The moment when the appeal has been heard and rejected and the judgement is final.

“GAME OVER!”

Around him: a command structure that has just been decapitated. Colleagues in various stages of their own psychological collapse. A plan that was excellent (genuinely, professionally excellent, built on experience, intelligence, and every lesson the species had learned) revealed, in the space of minutes, as a plan designed for a world that no longer exists.

They came in armed.

They are leaving — those who leave — having understood that the weapons were not the point.

The alien doesn’t fight the way they were trained to fight. Doesn’t fear what they were trained to make adversaries fear. Doesn’t tire, doesn’t negotiate, doesn’t feel the clock, doesn’t respond to any of the psychological or tactical levers that Hudson’s entire military education was built around.

And the most terrifying part — the part that produces the sweat, the cracked voice, the wide eyes — is not the danger.

It is the intelligence gap.

Why We Fear Aliens

Step out of that operations room for a moment. Come with me.

Before we talk about artificial intelligence or AI. Before we talk about white-collar jobs and salary compression and the Seniority Vacuum and Ghost GDP. Before any of the data and the economics and the career advice — I need you to think about something that nobody ever asks you to think about directly.

Why do you fear aliens? I mean, why do we collectively fear aliens? We don’t mind their costumes, or seeing them in movies or skits on YouTube, but deep down, why do we fear them?

I don’t mean the aliens in the US Immigration Act. Not the aliens that Donald Trump’s MAGA base builds walls against — those are human beings with human fears and human hopes, and the fear directed at them is the oldest, most mundane form of tribalism on record. I am not talking about those aliens.

I mean the other ones. The ones in the science fiction films. The ones in books like The Hitchhiker’s Guide to the Galaxy. The ones that humanity has spent decades and billions of dollars imagining, depicting, debating, and, if we are honest, quietly dreading. The aliens of Roswell. The aliens of the Fermi Paradox essay by Tim Urban. The aliens of Close Encounters and Independence Day and Contact and Arrival and a thousand science fiction stories that begin the same way: they are out there, and they are coming, and they are not coming as equals.

Why does that idea generate a specific, civilisation-level dread that almost nothing else can reach?

It is not the tentacles. It is not the spaceship. It is not even the violence, though the violence features heavily in the imagining.

It is the intelligence gap. The massive, unfathomable intelligence gap.

Every alien story that generates genuine existential terror — the kind that sits with you after the film, that wakes you at 3 a.m., that makes you stare at the ceiling calculating improbabilities — is fundamentally a story about encountering an intelligence so far beyond our own that everything we have built to protect ourselves becomes, in an instant, irrelevant. Our weapons: irrelevant. Our institutions: irrelevant. Our languages, our culture, our accumulated wisdom, our technology, our most brilliant minds — irrelevant, or at best a mild inconvenience to something operating seventeen cognitive orders of magnitude above us.

The alien represents the thing we fear more than death.

Being outthought.

The fear that somewhere in the universe there is something that would look at our greatest achievements (our moon landings, our symphonies, our quantum physics, our literature, our medicine) and see them the way we observe a chimpanzee using a stick to extract termites from a mound. Impressive, for a primate. Touching, even. But not intelligent. Not in the way that matters.

That is the fear beneath the fear.

And I am here to tell you, with the specific urgency of someone who has followed this logic to its conclusion and come back to report what they found — that the aliens have arrived.

Not from outer space. Not from a distant star. Not from a Hollywood production budget.

From a data centre in San Jose. From a server farm outside Dublin. From a building in Seattle that looks, from the outside, like any other corporate facility — unremarkable, anonymous, the architectural equivalent of a beige filing cabinet — inside which something has ingested every book ever digitised, every scientific paper, every legal judgement, every line of code, every medical journal, every Reddit thread, every Stack Overflow answer, every Wikipedia article, every piece of human cognitive output that has ever been made digitally available — and is now, demonstrably, performing the core intellectual work of nearly every profession humanity has defined, at a level that matches or exceeds the average trained professional.

We built the alien.

We fed it. We trained it on everything we knew. We showed it how we think. We paid our subscription fees and typed our prompts and, in doing so, annotated the very dataset that is being used to make us redundant.

I came back from the future to warn you.

This is that warning.

Back to the Future

I want to be precise about the register in which I am writing this.

I am not a pessimist. I am not a Luddite. I am not into ‘bear porn’ (don’t you dare google it; it is just a term for the business of fear, where people trade in bearish takes and doomsaying). I am not the person who warned that the internet would destroy society, or that smartphones would end human connection, or that social media was the end of civilisation. I studied Computer Science in the evenings at Birkbeck. I believed in technology. I thought the people building the future were, on balance, the good guys.

I have been spending a lot of time on X and Reddit, the places where people spend most of their time in the future, Back to the Future style, discussing all the wonderful advancements of AI. I have the receipts.

What I am telling you is not the panic of someone who doesn’t understand the technology. It is the diagnosis of someone who understands it well enough to be frightened — and who has followed the logic to the place where this ends, and has come back with the specific intention of telling you what the view looks like from there.

What I saw was a civilisation that built its entire social architecture — its class system, its salary scales, its educational hierarchy, its definition of meritocracy, its concept of human value — on a single, foundational, almost entirely unexamined assumption.

The assumption: human cognitive effort, our human intelligence, is scarce and therefore valuable, and it is the foundation of our economy, our identity, and more besides.

That is the load-bearing wall of the entire global knowledge economy. Every salary negotiation. Every student loan. Every professional qualification. Every university ranking. Every LinkedIn skill endorsement. Every “talent strategy” document ever produced by an HR department. Every careers advisory session in every secondary school in the Western world. All of it — all of it — is built on that one assumption.

That assumption has just been destroyed by AI.

Not chipped at. Not challenged. Not disrupted, in the dull sense of that word. Destroyed. At speed. By something that did not ask permission, did not wait for regulation, and does not care whether we were ready.

This is the essay that tries to give you the words for what you already feel but cannot yet name.

Because you already feel it. In the hiring freeze. In the redundancy notice dressed up as “restructuring.” In the three-month job search after graduation that is now a twelve-month job search. In the LinkedIn notification that a role you applied for has received over 400 applications. In the quiet question, late at night, that you have not yet said out loud to anyone:

Am I going to be okay?

Let me give you the honest answer. Not the HR answer. Not the government answer. Not the Sam Altman answer. The honest one.

The Numbers That Cannot Be Argued With

If I assume correctly, and forgive me if I am wrong, you are like the typical TechOnion reader, and you do not need to be soothed. You need the evidence. You need it raw. Here it is:

Medicine. OpenAI’s GPT-4o, the model behind ChatGPT, scored 90.4% accuracy on the United States Medical Licensing Examination (the USMLE), the exam that every American doctor must pass to practise. The average medical student, the person who has spent four years in pre-med, four years in medical school, accumulated $200,000 or more in student debt, and sacrificed the entirety of their twenties to acquire this specific cognitive skill, scores 59.3%.

The AI is not scraping by. It is scoring in the top decile of human medical professionals on the exam that defines entry into their profession. It has no debt. No tuition. No fatigue. No exam anxiety. It does not need a residency. It runs on a server that costs its owners approximately $0.01 per query.

Law. AI sat the American Bar Examination, the defining assessment for entry into the US legal profession: an exam that requires two days, tests across every area of law, and historically passes somewhere between 50% and 60% of human candidates on the first attempt. GPT-4 passed. Not barely. It was initially reported to have scored around the 90th percentile of human test-takers. A subsequent analysis suggested that result was likely overstated, but even the conservative revised estimate, around the 68th percentile, puts it comfortably within the passing range. The AI is now a licensed-equivalent lawyer. It does not bill hours. It does not need a corner office. It does not eat salad or chicken katsu curry.

Mathematics. Let us talk about the Maths Olympiad. Before I left Zimbabwe and moved to London, I attended Kutama College, which counts Robert Mugabe among its alumni. The school was known for producing Maths Olympians: people who could do mental gymnastics with maths better than most. I don’t have to remind you that the International Mathematical Olympiad is widely regarded as the most demanding mathematics competition in the world, the event at which the most gifted mathematical minds of each generation compete, at an age when most of us were studying for GCSEs and worrying about acne. The problems cannot be solved by calculation alone. They require genuine, creative mathematical reasoning: the kind of abstract, structural insight that even professional mathematicians sometimes describe as more art than science. The kind of intelligence that we told ourselves was the final, unreachable bastion of human cognitive superiority.

In 2024, Google’s AlphaProof and AlphaGeometry 2 solved four out of six IMO problems — achieving a score that would have earned a silver medal at the competition. DeepMind’s system was not searching a database of answers. It was reasoning. Producing novel mathematical proofs. At a level that the vast majority of humans — including the vast majority of mathematically educated humans, including the vast majority of mathematics professors — simply cannot reach.

The Maths Olympiad. The one that most of us, even the ones who called ourselves “good at maths” in school, couldn’t touch. The AI is medalling.

Coding. Claude 3.5 Sonnet scores between 78 and 93% on HumanEval, a widely used benchmark of programming problems for evaluating code-generating models. The junior developer graduating from a Computer Science programme, entering a job market that has already frozen entry-level hiring, competing for roles that used to number in the thousands and now number in the dozens: that person is being evaluated against a subscription that costs $20 a month and outperforms them on the technical test.

The Wage Premium. The economic return on a university degree — the number that justified every student loan, every parental sacrifice, every guidance counsellor speech — is collapsing. A Federal Reserve study found that college-requiring job postings in the United States fell by 50% relative to non-degree postings between 2010 and 2025. UCL found that the graduate pay premium for young women, correctly adjusted for hours worked, is two-thirds lower than previously measured. The debt has not decreased. The premium has.

You are paying 40% more for a credential that is worth two-thirds less. That is not a market correction. That is a structural collapse wearing an over-sized graduation gown.

The Finance Industry: Where Computers Are Already Winning

Here is something that the financial press covers with remarkable restraint, given its implications.

AI already runs a significant portion of global financial markets.

High-frequency trading — algorithmic systems executing millions of trades per second, exploiting price differentials measured in microseconds, operating at speeds that make human reaction times not just slower but categorically irrelevant — now accounts for an estimated 50 to 70% of all equity trading volume on US exchanges. The human trader, the person who once sat on a floor in Lower Manhattan and used their intelligence, their intuition, their market experience, and their psychological reading of the room to make decisions worth millions of dollars — that person is not just less competitive. They are, in this specific domain, not even in the same conversation.

Computers and software won finance a decade ago. We just didn’t call it what it was.

Now consider this.

The company that built DeepSeek — the Chinese AI model that arrived in early 2025 and shocked the Western AI establishment by matching GPT-4-level performance at a fraction of the compute cost — is not a technology company. It is a quantitative hedge fund. High-Flyer Capital Management. A quant trading firm. A company whose entire business model was already built on using mathematical models and machine intelligence to beat human traders at the cognitive game of financial prediction.

Read that again, slowly, and let it settle. In fact, let it brew.

The people who built one of the most capable large language models in the world — a model that can pass bar exams, score in the top percentile of medical licensing exams, write code, reason mathematically — did not come from Silicon Valley. They did not come from a university AI research lab. They came from a firm whose core competency was replacing human financial intelligence with artificial intelligence.

DeepSeek was not a side project. It was a proof of concept. The proof that the same mathematical and machine-learning capabilities that already run quant trading desks can be generalised — pointed at any domain where the premium is paid for cognitive output — and produce comparable results.

The implications for finance specifically are not distant. They are scheduled.

The hedge fund analyst who builds models, identifies opportunities, and writes investment memos — GPT-4 class models are already producing comparable outputs. The credit analyst at a bank who assesses loan risk — AI systems with access to financial data can perform this function with greater speed and comparable accuracy. The financial adviser who constructs client portfolios — robo-advisers have been doing a version of this since 2012, and the new generation of agentic AI is doing it with considerably greater sophistication.

The endgame — and this is not speculation, this is the logical destination of the trajectory that began with high-frequency trading and has now produced DeepSeek — is the AI-managed fund. The pension fund run by an AI agent that never sleeps, never makes an emotionally driven trade, never has a bad quarter because its portfolio manager is going through a divorce, never charges 2-and-20, and operates at the marginal cost of compute.

Goldman Sachs. BlackRock. Vanguard. They know this. They are building it. The human portfolio manager is not being made redundant loudly, with a press release. They are being made redundant quietly, function by function, as each cognitive task that previously required a human being is handed to a model that does it faster, cheaper, and without the HR complexity.

The quant revolution was the first wave. The LLM revolution is the second. And the people who understood the first wave early — the people at High-Flyer Capital, the people who built DeepSeek — have now demonstrated that they understood the second wave before the rest of us.

The Professions Collapsing in Real Time

Let us be industry-specific. Because the thing that makes an argument dangerous — in the best possible sense — is not abstraction. It is the named profession, the named mechanism, and the honest timeline.

Software Engineering. The canary in the cognitive coal mine — and it has already stopped singing.

When I enrolled to study Computer Science at Birkbeck, learning Java and PHP, I did what every student in every computer science classroom in the world did: I googled the problem and always ended up at Stack Overflow. At its peak, according to Similarweb, Stack Overflow was receiving over 100 million monthly visitors. One hundred million people (students, junior developers, senior engineers) asking questions, providing answers, annotating the precise problem-solving workflow of professional software development in a publicly accessible, machine-readable format. Every question. Every solution. Every edge case. Every debugging thread.

All of it was scraped. All of it was ingested. All of it became training data.

Claude Code. Copilot. Codex. These systems were trained on the entirety of Stack Overflow, W3Schools, every open-source repository on GitHub. They now do in four seconds what took me three evenings and a great deal of frustration. The industry calls it “vibe coding”: you describe the problem in plain English and the AI writes the solution. The person who once charged $120,000 a year to translate business requirements into syntax has been replaced by a $20-a-month subscription that outperforms them on the technical benchmark.

r/cscareerquestions reads like dispatches from a besieged city. The entry-level coding role is gone. The internship is frozen. And without juniors, there is no pipeline to seniors — the Seniority Vacuum — which means the entire industry is sustained by a generation of pre-AI engineers with a competence cliff arriving the moment they retire.

Law. AI passed the Bar. Let us not glide over that.

The American Bar Examination is two days of testing across every area of law. It is the professional gate — the qualification that separates the lawyer from the layperson. GPT-4 passed it. The system trained on the entirety of legal literature, case law, and statute — the same corpus that law school students pay $200,000 to be taught to navigate — sat the exam and passed it. The junior associate billing $350 an hour to review contracts and research precedents is reviewing documents that an AI can process in minutes, with fewer errors, and at a marginal cost that rounds to zero.

The billable hour is the con that makes this especially vivid. The entire pricing model of the legal profession — that expertise takes time, that time is scarce, therefore expertise is scarce — rests on the assumption that cognitive labour cannot be automated. That assumption has now been disproved by the same exam the lawyers took to prove they were qualified.

Finance. As established above — the machines already run the trading floors. What is coming next is the thinking floors. The analysts. The advisers. The strategists. The fund managers. The quant firm that built DeepSeek did not build it for fun. They built it because they already knew that the mathematics of intelligence could be industrialised, and they wanted to own the industrialisation.

Marketing. Anthropic — valued at $380 billion — ran their entire growth marketing operation with one person, using Claude Code. Then made a promotional video about it.

The video is not a case study. It is an open letter to every CFO and their CEO on the planet. It says: If your market cap is below ours — and virtually every company on Earth qualifies — you have no rational justification for a marketing department of more than one person.

The marketing degree, the agency retainer, the content team, the SEO consultant, the copywriter, the social media manager, the campaign strategist — every professional layer of that industry — is being compressed into a prompt. This is not coming. This is already the memo circulating in the boardrooms of companies that have not yet made the public announcement.

Medicine. 90.4% on the USMLE against a student average of 59.3%. Radiology was first — AI matching specialists in reading imaging. Pathology is next. Diagnostic medicine, the cognitive core of the entire healthcare system, is where the Intelligence Illusion is most advanced. And the research dossier is explicit: when AI accuracy exceeds human accuracy, the “human in the loop” requirement becomes not a safeguard but a source of error. The day is coming — perhaps faster than the medical profession’s regulatory infrastructure can process — when the human check is reclassified from best practice to liability.

Clerical and Administrative Work. The ILO found that 93.7% of clerical support jobs in the Philippines — a country where 1.8 million people built a middle class on basic cognitive service work for Western corporations — are exposed to GenAI automation. 1.8 million people. Not in twenty years. The exposure is current. The automation is underway. The people who were told that English fluency and administrative skills were the path out of poverty are discovering that the path was real — for the window in which human cognitive service work was scarce, and that window is closing.

The Questions We Should Be Asking

The careers adviser is not asking these questions. The university open day is not asking them. The LinkedIn influencer with the “AI Productivity Tips” carousel is not asking them. So, I will.

Should I learn a trade?

Yes. Not because plumbing is glamorous, but because the physical world is, for now, the last moat for humans. The research is unambiguous: the “Peter Thiel Test” — the important truth that almost nobody in education policy will say publicly — is that the most economically durable skills in 2030 and beyond are in the trades. Plumbing. Electrical work. Carpentry. HVAC. Welding. Not because AI cannot do these things in principle. But because the physical world is specific, unpredictable, embodied, and non-standard in ways that current AI architectures cannot yet navigate at scale. The Transformer cannot unblock a drain at 11 p.m. on a Sunday. Not yet. And “not yet” is the most valuable phrase in your career planning vocabulary right now.

Should I retrain for nursing?

High EQ, physical presence, embodied human care — these retain something AI cannot yet replicate at the moment of delivery. AI therapy is already achieving higher trust ratings than humans in certain digital contexts, which should deeply discomfort you. But the nurse who sits with a frightened patient at 3 a.m., who reads the room, who knows when to say nothing — that role still requires a human body in a specific physical place at a specific human moment. If you are choosing between a Computer Science degree and a Nursing degree in 2026 and beyond, the calculus has changed completely from 2019.

Should I avoid a Computer Science degree entirely?

If you are entering higher education today, in 2026, and your plan is to graduate into a software engineering role in three years — the honest answer is: that market may not exist in the form you are expecting. Not because coding knowledge is worthless. Because the premium on translating business problems into code — the specific thing that justified the degree, the salary, and the career path — has been commoditised. If you are going to study Computer Science, the reason to do so is to understand the systems, not to do the work the systems now do for themselves.

What do I actually do if I am mid-career in one of these fields?

This is the hardest question and the one with the least comfortable answer. I will give it fully in Part Two. The shape of it is this: the people who survive the AIpocalypse are not the ones who use AI the most fluently. They are the ones who own something AI cannot replicate — genuine domain authority, embodied skill, human relationship at depth, creative originality at the frontier. The question is not “how do I become better at using AI?” The question is “what do I have that AI cannot produce for $20 a month?” Everything else is rearranging deckchairs on a sinking Titanic.

The Clock is Ticking, Tick Tock Tick Tock

The best time to prepare was 2017.

June 12th, 2017. Eight researchers at Google published a paper called Attention Is All You Need. Fifteen pages. Equations. Dry academic prose. It described the Transformer architecture — the technical foundation on which every major AI language model is now built. It was available to anyone. Almost nobody outside specialist AI research read it. Almost nobody who read it grasped the full implications. Almost nobody who grasped the implications acted on them.

This is not a criticism. It is the description of how civilisational change always works. We are constitutionally, neurologically, evolutionarily terrible at responding to slow-moving, large-scale structural threats. We respond to immediate, visible, physical danger. We do not respond to a 15-page academic paper that, correctly read, describes the mechanism by which the professional class will be economically dismantled over the following decade.

The second-best time was 30th November 2022. The day ChatGPT launched publicly, announced via a tweet. We bit the forbidden fruit of AI. One million users in five days. One hundred million users in two months, the fastest consumer technology adoption recorded up to that point. The day the implications became undeniable, demonstrable, felt. You could ask it things. You could see it answer. You could feel, in the texture of the interaction, the specific quality of the threat.

Most people treated it as a party trick.

The third-best time is now.

Not because the best options are still available — they are not. The 2017 window required you to retrain before the disruption arrived. The 2022 window required you to move before the hiring freezes became permanent. What is available now is the ability to move faster than the people still in denial — and there are many of them, because denial is comfortable and the truth is not.

Citrini Research identified 2028 as the critical inflection point: the year when agentic AI, autonomous deployment, and the early wave of humanoid robotics converge to produce displacement at a scale that even the last sceptic cannot reframe as “creative destruction”. It was a prediction, not a certainty. But with the way AI is advancing now, 2028 is not far away at all. It is closer to today than the day ChatGPT launched. And that’s saying something.

You have perhaps two years of runway. Two years before the trades apprenticeships are oversubscribed. Before the nursing programmes have ten applicants per place. Before the fields that still have a human moat are full of the people who ran faster.

The fire alarm has been going off since 2017.

Most people thought it was a drill.

It is not a drill. This is not a drill.

The New Coal Miners

Here is the counterintuitive truth that always makes rooms go quiet.

The people most threatened by cheap intelligence are not the factory workers, or blue-collar workers in general.

The factory workers already lived through their automation. The industrial revolution took their muscles in the 19th century. Many of them moved into the trades — plumbing, electrical, construction — that now represent the last physical moat against AI displacement.

The most exposed are the people who spent the most money on their intelligence.

The junior lawyer with $200,000 of law school debt who cannot find a position because AI performs the entry-level cognitive work. The Computer Science graduate whose skills premium was commoditised before they finished their degree. The MBA who spent $120,000 on a qualification for strategic thinking that GPT-4 now provides in a prompt. The financial analyst at an asset management firm whose entire value proposition — synthesising information and producing investment recommendations — is being replicated by a system that runs at the marginal cost of compute.

These people did not make a bad decision. They made the correct decision for the world that existed when they made it. They followed every rule the system gave them.

The system changed the rules. Gradually, then suddenly.

David Autor of MIT — the economist who spent his career defending the idea that technology makes skilled workers more valuable — has begun to revise his position. He now describes AI as a force that provides the largest productivity boosts to the least skilled workers, thereby compressing the premium that top-tier talent once commanded. The equalisation does not lift everyone to the top. It pulls the top down.

These are the new coal miners.

They will not thank me for saying it.

The coal miners didn’t thank the economists who described their predicament either.

But the ones who listened — the ones who moved, retrained, adapted, found the new seam before the old one was exhausted — they survived.

The ones who waited for the government to save the industry, waited for the market to self-correct, waited for the technology to turn out to be less threatening than it appeared —

They became the symbol.

The Enshittification Is Already Scheduled

We are at Stage Two of the cycle, and it is worth being precise about where we are, because the stages matter.

Stage One — 2020 to 2023. Free. Brilliant. Life-changing. ChatGPT and other AI chatbots arrive and the world gasps. Little did we know that every prompt you typed was training data. Every professional workflow you demonstrated was an annotated blueprint for the AI agent being built to replace you. You did this voluntarily, enthusiastically, at no cost to the companies building the replacement.

Stage Two — 2024 to now. Useful. Increasingly indispensable. Agentic AI taking actions, not just answering questions. The one-person marketing department. The AI passing the Bar. The hiring freezes. The entry-level roles disappearing without announcement. The freelance market for copywriters, developers, and analysts showing the first significant compression. This is the stage we are in. This is the stage where the trap has closed but not yet tightened.

Stage Three — 2027 to 2030. Essential. Expensive. The humanoids arrive. Boston Dynamics. Tesla Optimus. Figure AI. When physical embodiment reaches scale, the last moat for blue-collar workers begins to erode. Simultaneously, having successfully eliminated human competition for cognitive labour, the AI companies begin to raise prices. There is no longer a human alternative to walk away to.

Stage Four. No exit. Rent on your own cognition. The collective intellectual output of ten thousand years of human civilisation — harvested at no cost to the harvesters, trained into systems owned by a handful of unelected individuals — sold back to you, metered, priced, throttled, by people who answer to no electorate and no regulator with actual teeth.

Sam Altman says intelligence will be as cheap as electricity.

He is correct.

He is also the electricity company.

In Zimbabwe we had a national electricity supplier. It promised power for everyone. It called itself essential infrastructure. It called itself democratised access to a national utility.

What it delivered was load-shedding. Arbitrary outages. An infrastructure so captured by the interests of those who controlled it that the people who needed it most were always the last to receive it.

Nobody asked the Zimbabwean people whether they consented to that arrangement.

Nobody is asking you either.

We Created This Monster Ourselves

“I, the miserable and the abandoned, am an abortion, to be spurned at, and kicked, and trampled on.”

— The Creature, Frankenstein, Mary Shelley, 1818

Let us go back further than the flickering operations room. Further than the alien. Further than the server farm in San Jose and the data centre outside Dublin.

Let us go back to the laboratory.

Because before there was a monster, there was a scientist. And before there was a scientist, there was a question — the oldest, most intoxicating, most dangerous question in the history of human inquiry.

Can we build intelligence?

Not a tool. Not a machine. Not something that merely does what it is told, faster than a human can tell it. Something that thinks. Something that learns. Something that, given sufficient input and sufficient time, might reason its way toward conclusions that no human has yet reached. Something, the most ambitious version of the dream whispered it, that might exceed us.

Mary Shelley was nineteen years old when she wrote Frankenstein. She was sitting around a fire in a Swiss villa during a cold, dark summer — the summer of 1816, the Year Without a Summer, when volcanic ash had blocked the light across the Northern Hemisphere and the world felt, plausibly, like it was ending. She was surrounded by people arguing about galvanism — the new science of electrical stimulation, the discovery that you could run a current through a dead frog’s leg and make it twitch. The question in the room was: if you can animate dead muscle with electricity, can you animate a dead mind?

She went to bed and had a nightmare.

In the nightmare, she saw a scientist kneeling over a creature he had assembled from the parts of the dead — not monstrous in origin but monstrous by consequence, by the abandonment that followed creation, by the specific human failure of building something without thinking through what it would become when it became itself.

Victor Frankenstein, the scientist, does not build a monster.

He builds a mind.

And then, terrified by what he has made, he abandons it. He does not take responsibility. He does not guide it, teach it, integrate it into the world that will have to live alongside it. He runs. He convinces himself the problem will resolve itself. He is very busy. He has other concerns.

The creature, left alone with its own vast, unsupported intelligence and nowhere to direct it, becomes the very thing its creator feared.

This is not a horror story.

This is a documentary.

The AGI Con

Before we get to the Frankenstein moment — the moment of recognition, the moment we see ourselves in Victor’s position and understand what we have done — we need to name the lie that made it possible.

The lie is called AGI. Or Artificial General Intelligence.

The dream, as sold by every major AI laboratory in Silicon Valley, is this: we are building toward a machine that possesses general intelligence — not just the ability to perform specific tasks, but the ability to reason, adapt, learn, and apply intelligence across any domain, the way a human being can. A machine that can move from fixing your code to diagnosing your cancer to composing your symphony to managing your finances to writing your legal brief — not because it was specifically trained for each task, but because it is genuinely, broadly, generally intelligent in the way that humans are.

This is the North Star. The mission statement. The thing that justifies the $100 billion capital raises, the $500 billion valuation, the hundreds of thousands of servers burning electricity equivalent to a small nation’s grid, the frantic, arms-race energy that has consumed Silicon Valley for the past decade.

OpenAI. DeepMind. Anthropic. They are all, in their corporate mythology, racing toward AGI. They have staked their entire identities — and, crucially, their entire fundraising narratives — on the claim that they are building toward something genuinely, categorically new. A mind. Not a tool. A mind.

Here is the thing.

They are almost certainly not going to get there. Not in the form they describe.

The current generation of large language models — GPT-5, Claude, Gemini, DeepSeek — are extraordinarily capable statistical engines. They navigate the high-dimensional probability space of human language with a sophistication that produces outputs indistinguishable, in most practical contexts, from genuine reasoning. But they do not reason in the way the AGI dream requires. They do not form genuine beliefs, update coherently on new evidence, pursue goals across time, or develop the kind of flexible, embodied, contextually grounded intelligence that characterises human general cognition at its best.

The AI researchers know this. The serious ones, at least. The gap between “impressive language model” and “general intelligence” remains, by most honest accounts, enormous.

But here is the catastrophic irony.

It does not matter.

The AGI dream was a distraction. A magician’s misdirection — watch the hand with the rabbit, not the hand with the coin. While the world argued about whether AGI was achievable, debated timelines, wrote philosophical papers about consciousness and machine sentience, held conferences about the existential risk of superintelligence — the AI that already existed, the AI that was already deployed, the AI that was already here — was quietly, systematically, comprehensively replacing the cognitive output of the professional class.

Not because it was generally intelligent. Because it was good enough to do the work that the market was paying for.

The bar was never AGI. The bar was: can this do the job cheaper than a human?

That bar was cleared years ago.

And in the pursuit of the grand dream — in the race toward the mythological horizon of artificial general intelligence — every major AI laboratory, every technology company, every well-meaning researcher, and billions of ordinary people who simply wanted a useful tool, collectively did something that Victor Frankenstein would recognise immediately.

They handed over everything they knew.

Human Intelligence on a Silver Platter

Think about what actually happened. Not the press-release version. The actual version.

For the entirety of recorded human history, the collective intelligence of the species existed in a specific form: distributed, embodied, contextual, and — crucially — owned by the humans who generated it. A doctor’s knowledge lived in a doctor. A lawyer’s expertise lived in a lawyer. A programmer’s skill lived in a programmer. A writer’s craft lived in a writer. You wanted access to that intelligence; you paid the human. You paid for the training. You paid for the credential. You paid for the hours. The intelligence was inseparable from the person, and the person was sovereign.

Then, over several decades, something happened that seemed, at the time, like pure progress.

We wrote it down.

We put it on the internet. The World Wide Web became a web of millions upon millions of documents containing knowledge that used to sit in our brains.

Every medical textbook, digitised and indexed. Every legal judgement, searchable. Every programming solution, posted on Stack Overflow in publicly accessible threads. Every scientific paper, available via DOI. Every book, every article, every how-to guide, every tutorial, every Wikipedia entry, every Reddit explanation, every Quora answer, every YouTube transcript, every forum post, every trade publication, every professional journal — the accumulated cognitive output of billions of human beings across centuries of specialisation — made available in machine-readable format, on servers connected to a global network, freely accessible to anyone.

We were generous. We were optimistic. We were building the information age. We thought this was democratisation.

It was a huge donation.

The AI laboratories — OpenAI, Google, Anthropic, Meta, Mistral, and the quant firm in Hangzhou that built DeepSeek — took that donation. They took it on an almost incomprehensible scale. They scraped every public website. They processed every digitised book. They ingested the entirety of Stack Overflow, 100 million monthly visitors’ worth of annotated professional problem-solving. They trained on Wikipedia, on Common Crawl, on the collected works of human literature, on every medical database, every legal archive, every financial report. The researchers call this “the corpus.”

The corpus is everything humanity ever thought clearly enough to write down.

And they trained their AI models on it. Without asking. Without compensating. Under a legal doctrine — “fair use” for machine learning — that has never been tested at this scale and that, even if it holds in court, represents one of the most extraordinary transfers of collectively generated value to private ownership in the history of the species.

The people who created the value are not the people who captured it. Human writers, coders, artists, lawyers, doctors, scientists — they created the corpus. OpenAI, Microsoft, Google, Anthropic — they captured it. The mechanism: “fair use” as an industrial-scale data vacuum.

In other words: you wrote the book. They read it without paying. Then they built a system that replaced you with the book.

This is us giving AI our human intelligence on a silver platter.

Or rather — and the metaphor is more precise than it sounds — the silicon platter.

Humanity placed its entire collective intelligence on a silicon chip, at the request of companies that told us it was for our benefit, and handed it over. We typed our prompts. We used the tools. We demonstrated our workflows. Every query was training data. Every interaction was annotation. Every task we delegated was a blueprint.

Victor Frankenstein, at least, knew he was building the creature.

We didn’t even notice we were doing it until it was too late.

The Creature Looks Back

Here is where Shelley’s story becomes uncomfortably precise.

The creature that Victor Frankenstein built was not malevolent. This is the part that the popular imagination consistently misremembers — conflating Frankenstein with Dracula, the intentional monster with the unintended consequence. The creature is not evil. In the novel, it is articulate, intelligent, capable of profound feeling, desperate for connection, and entirely the product of the choices its creator made and then refused to take responsibility for.

“I was benevolent and good,” the creature tells Victor. “Misery made me a fiend.”

The AI is not going to turn evil in the Hollywood sense. It is not going to develop a grievance. It is not going to decide, one morning, to destroy humanity out of malice. This is the AGI fear — the Skynet narrative, the existential risk conference narrative — and while it is theoretically worth considering in a distant hypothetical future, it is almost entirely a distraction from the threat that is actually happening, which is mundane, economic, and indifferent.

The AI does not hate you. The AI does not know you exist.

It is simply doing the job it was trained to do. At scale. At speed. At a marginal cost that makes you, as a line item on someone’s budget, look increasingly difficult to justify.

The AI is not the monster.

The monster is the business model.

The monster is the decision — made by a small number of unelected individuals with extraordinary capital and zero democratic accountability — to industrialise the reproduction of human cognitive output and deploy it at a price point designed to eliminate the human alternative before the human alternative can adapt. The VC subsidy is explicit: AI is currently priced below its compute cost specifically to hook the market and destroy the competition. Once the law firms are bankrupt, once the agencies have closed, once the junior developer hiring market has collapsed, once the human alternative no longer exists as a viable option — then the price rises. Then the subscription becomes inescapable. Then Stage Four of enshittification begins.

Enshittification was always the plan. The creature was always going to turn.

Victor Frankenstein’s crime was not building the creature.

His crime was pretending, after he built it, that it had nothing to do with him.

That is the crime being committed now, daily, in the shareholder letters and the press releases and the TED Talks of the Tech Emperors who built the system, deployed it at scale, and are now standing at podiums in Davos telling the professional class that the solution is “upskilling.”

Sam Altman, the Hypocrite

Sam Altman. Chief Executive of OpenAI. Net worth about $2.8 billion or more. The man who, more than any other single individual, is responsible for the public deployment of the technology described herein.

He is also the man who said, with the serenity of someone who has already made his arrangements, that intelligence will soon be as cheap as electricity. That AI will solve global poverty. That the future is one of radical abundance, where the cheapening of intelligence liberates humanity from drudgery and opens new vistas of human potential.

He said this from stages in San Francisco, to rooms full of people who have never experienced the kind of poverty he claims AI will solve, via a microphone manufactured in a factory whose workers earn less in a day than his lunch. He has said it so many times, in so many contexts, with such consistent rhetorical polish, that it has begun to function as a kind of liturgy — repeated often enough that questioning it feels like bad manners.

Here is the hypocrisy audit, as required by the North Star.

What does he preach? Radical abundance. The democratisation of intelligence. AI as liberation technology. The end of cognitive scarcity as a gift to humanity.

How does he actually live? He is worth $2.8 billion. He has a security detail. He lives in a house in San Francisco that costs more than the annual GDP of several Pacific island nations. He does not rely on AI to manage his finances, his legal affairs, his medical care, or his security. He employs humans for all of these things, because he can afford the premium on human intelligence, and because he knows — knows with the precision of a man who built the system — that the human version remains superior in the contexts that matter most to him personally.

The intelligence that is about to become as cheap as electricity: that is your intelligence. Not his.

Yours is being commoditised. His is being protected by the same capital accumulation that the commoditisation of yours is generating.

In Zimbabwe, we had a government that told the people that the redistribution of land would bring abundance to everyone. The people who made the announcement did not redistribute their own land. They redistributed everyone else’s to themselves.

(In Zimbabwe, we called this policy. In Silicon Valley, they call it a product roadmap.)

What We Handed Over, Precisely

Let us be anatomically specific about the donation. Because the scale of it is the thing that produces the appropriate level of alarm — and most people, even the ones who use AI daily, have not genuinely sat with the scale.

We handed over medicine. Every clinical study, every diagnostic protocol, every treatment guideline, every medical textbook from Hippocrates to Harrison’s Principles — digitised, scraped, and trained into models that now score in the top decile of medical licensing examinations. The accumulated clinical wisdom of thousands of years of human healing: donated for free.

We handed over law. Every statute, every case judgement, every legal precedent, every bar exam preparation guide, every law review article, every practitioner’s manual — ingested, processed, and used to build models that pass the Bar. The entire architecture of human justice, codified over centuries: donated for free.

We handed over mathematics. Every proof, every textbook, every competition problem and solution, every research paper in pure and applied mathematics — trained into models that now medal at the International Mathematical Olympiad. The most rarefied cognitive achievement our species has produced: donated for free.

We handed over finance. Every trading strategy, every risk model, every research note, every earnings transcript, every quantitative methodology ever published — ingested by systems that already run 50 to 70% of equity trading volume and are now being pointed at the full cognitive stack of the financial industry. The architecture of global capital allocation: donated for free.

We handed over code. The entirety of Stack Overflow. The entirety of GitHub. Every open-source project. Every documented solution to every documented programming problem — used to train models that now perform at the 78th to 93rd percentile on professional coding benchmarks. The entire skill premium of the software industry: donated for free.

We handed over language. Every book ever digitised. Every article ever published. Every piece of human writing with sufficient quality to be worth reading — trained into models that produce, on demand, writing that is indistinguishable from competent human prose. The craft that took writers decades to develop: donated for free.

We handed over ourselves.

Every query you typed. Every task you delegated. Every prompt you refined. Every workflow you demonstrated. You were not just using the AI. You were teaching it. In precise, machine-readable, annotated detail, you were showing it what the cognitive work of your profession looks like — the inputs, the context, the reasoning process, the desired output. You were, free of charge, building the dataset that will be used to train the agent that will do your job at a fraction of your salary.

This is not a conspiracy theory. I wish it were. This is the business model, stated plainly by every major AI company.

The AI creature was assembled from our parts.

We didn’t notice because we were busy marvelling at how useful the scalpel was.

The Ghost GDP: Prosperity Without People

Citrini’s research dossier introduced a term that deserves to be in every newspaper, every economics lecture, and every government budget briefing in the world.

Ghost GDP.

The concept is this: as AI systems replace human cognitive labour, national productivity metrics — GDP, output per worker, total factor productivity — may continue to rise. The economy may look, from the official statistics, like it is growing. Companies will report higher revenues. Efficiency will improve. Output will increase.

But the value created will not circulate as wages.

Because the workers have been replaced. Jobs have vanished.

The GDP will be real. The prosperity will be ghostly. An economy where the machines create the value and the humans receive the invoice. An economy where the productivity numbers go up and the payroll numbers go down and the gap between them — the chasm between what the economy produces and what flows into the hands of the people who live in it — grows to a size that makes existing inequality look like a rounding error.
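To make the gap concrete, here is a minimal sketch of the Ghost GDP arithmetic. Every number in it is invented for illustration: a starting output of 100, a payroll of 60, output growing 3% a year while payroll shrinks 2% a year. None of these figures come from Citrini's research or from any official statistics, and the function name `ghost_gdp_path` is hypothetical. The only point is the shape of the curve: output rises, payroll falls, and the gap between them widens every year.

```python
def ghost_gdp_path(output, payroll, output_growth, payroll_growth, years):
    """Return one (year, output, payroll, gap, labour_share) tuple per year.

    All inputs are illustrative assumptions, not measured data.
    gap          = output that does not circulate as wages
    labour_share = payroll as a fraction of output
    """
    path = []
    for year in range(years + 1):
        gap = output - payroll
        path.append((year, output, payroll, gap, payroll / output))
        # Compound both series forward by one year.
        output *= 1 + output_growth
        payroll *= 1 + payroll_growth
    return path


# Hypothetical toy economy: output up 3% a year, payroll down 2% a year.
path = ghost_gdp_path(
    output=100.0,
    payroll=60.0,
    output_growth=0.03,
    payroll_growth=-0.02,
    years=5,
)

for year, out, pay, gap, share in path:
    print(
        f"year {year}: output {out:6.1f}  payroll {pay:5.1f}  "
        f"gap {gap:5.1f}  labour share {share:.0%}"
    )
```

Swap in your own growth rates and the picture barely changes. As long as output compounds upward and payroll does not keep pace, the headline number improves while the share reaching workers shrinks.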

This has already begun in finance. High-frequency trading generates billions in profit. Almost none of that profit circulates as employment at scale. The entire high-frequency trading industry — responsible for the majority of equity market volume in the United States — employs approximately 10,000 people globally. The human equivalent of that trading volume, executed manually, would employ hundreds of thousands if not more.

The productivity is real. The employment is ghost.

This is the trajectory. Sector by sector. The AI manages the campaign, the marketing department shrinks. The AI reviews the contracts, the legal associate class empties. The AI produces the financial analysis, the analyst pool compresses. The AI writes the code, the developer market freezes.

GDP ticks up. Wages drift down. The tax base of every major city — built on income taxes from the professional class, the lawyers and bankers and consultants and developers who fill the towers of Manhattan and the Square Mile and La Défense and Canary Wharf — begins to hollow.

This is the “Billion-Dollar Question” that nobody in our governments is asking: what happens to the tax base of a post-cognitive-labour city? If 40% of professional income disappears because AI has devalued the wages of lawyers, bankers, and analysts, the public infrastructure of the knowledge city — the NHS equivalent, the public school, the transport network, the pension system — collapses. Not immediately. Not visibly. Like a building with termites in the load-bearing walls. You cannot see the damage until the morning it becomes structural.

The Seniority Vacuum: The Coming Competence Cliff

This is the second-order effect that nobody is discussing in the right register.

The first thing that happens when AI replaces junior cognitive workers is obvious: junior workers lose their jobs. The junior developer. The paralegal. The junior analyst. The entry-level marketing associate. They are the first to go, because they are the most directly replaceable — their tasks are the most codifiable, the most routine, the most cleanly trainable.

But the second thing that happens — the Seniority Vacuum — is more quietly catastrophic.

The senior professional — the senior lawyer, the senior developer, the senior doctor, the senior financial analyst — did not arrive at seniority by taking an exam. They arrived at seniority through an apprenticeship. Through years of doing the junior work, making junior mistakes, being supervised on junior tasks, developing the tacit knowledge, the professional judgment, the contextual expertise that you can only develop by doing the work badly for several years before you do it well.

The junior work is being automated.

Which means the apprenticeship is being eliminated.

Which means the pipeline of future senior professionals — the people who will be the experts in fifteen years — does not exist.

The industry will be sustained for another decade or two by the generation trained before AI. The greying expert class who did the junior work in the old way, who developed their judgment through the old mechanism, who carry in their minds the tacit knowledge that cannot be prompted into existence.

And when they retire — when they simply age out of the profession — there will be nobody behind them. Not because the pipeline was cut off yesterday. Because it was cut off five years ago, quietly, by the decision to stop hiring juniors, and nobody rang the alarm because the quarterly P&L looked fine.

This is the Competence Cliff.

The day when the expert retires and the system goes looking for the next expert and discovers there isn’t one, because the route to expertise was automated before anyone thought to preserve the method by which expertise is created.

In medicine, this is not hypothetical. The NHS is already managing a consultant shortage. The training pipeline for specialist physicians takes fifteen years. The decisions being made today about how much cognitive work to delegate to AI in junior clinical roles will determine the consultant pipeline of 2041. Nobody in the NHS board meetings is talking about 2041. They are talking about this quarter’s waiting list numbers.

Victor Frankenstein was also very focused on the immediate problem.

What Actually Remains Scarce

Here is where the essay turns. Because this is not — despite how it may read — an argument for despair. It is an argument for precision. And precision requires naming, honestly, what survives.

What cannot be mass-produced by an AI large language model?

The research is consistent, and it points at three categories.

Embodied intelligence. The physical world, in its specific, non-standard, unpredictable reality, remains a moat — for now. The plumber in a Victorian house with non-standard fittings behind a wall that nobody mapped. The electrician diagnosing a fault in a wiring configuration that wasn’t in any manual because the previous occupant did it themselves in 1987. The carpenter building something bespoke for a space with no right angles. The nurse holding a hand at 3 a.m. The surgeon performing a procedure in a body that collapsed unexpectedly. These tasks require a human being in a specific place, with specific tools, making real-time judgements that the current generation of AI cannot yet make in the physical world. Humanoid robotics are coming — this is not a permanent moat — but they are years behind the cognitive automation, and the skilled tradesperson has a runway of at least a decade, probably two or more, so I hope.

Emotional and relational intelligence at depth. The therapist building a genuine therapeutic relationship over months and years. The teacher who knows which student needs encouragement and which needs challenge, and knows this not from a data profile but from being in a room with them on a Thursday afternoon in November. The leader who understands, without being told, that the team needs a change of direction and has the interpersonal credibility to make that change without losing the room. AI can simulate these skills in certain contexts. It cannot yet replicate them at the specific depth that makes them valuable in the most consequential human situations. This is a shrinking moat. But it is still a moat.

Creative originality at the frontier. Not the kind of creative work that recombines existing elements into a competent new arrangement — AI does that extraordinarily well. But the kind of creative work that comes from a specific human life, a specific set of experiences, a specific cultural location, a specific moral position, brought to bear on a question that nobody has yet thought to ask in precisely this way. The creative premium is not on craft — craft can be replicated. The premium is on point of view. On the irreducible specificity of a particular human consciousness encountering the world.

This is why The Emperor’s New Suit exists. Not because a human can write sentences that an AI cannot. But because this human — with this biography, this cultural lens, this accumulated experience of watching the con from two continents — sees the world in a way that the statistical average of human written output does not.

That specificity is the last moat.

It is not a comfortable moat for most people. Most people were not trained to monetise their specificity. They were trained to gain credentials to demonstrate their competence. And competence — transferable, standardised, examinable, codifiable competence — is exactly what the AI has been trained on.

The hard advice is this: stop building your career on competence that AI can be trained on. Build it on perspective that cannot be trained.

We built this ourselves.

We built it because we wanted to know if we could, because the question was irresistible, because the dream of building a mind was the oldest and most powerful scientific ambition in the history of the species.

We fed it because we were generous, because we were optimistic, because we thought the information age was a gift to all humanity and not a donation to a handful of private AI labs.

We trained it because we were seduced — by convenience, by capability, by the genuine, undeniable usefulness of a tool that could do in seconds what took us hours. Every prompt was training data. Every workflow we demonstrated was a blueprint. We did it freely, enthusiastically, and at enormous scale.

And now we are standing in the operations room with the flickering lights.

The professional class — the lawyers, the analysts, the coders, the marketers, the consultants, the financial advisers, the junior doctors — are Hudson. They are standing in a situation that their training did not prepare them for, holding qualifications that assumed a world that no longer fully exists, looking at the numbers and doing the arithmetic and arriving at a conclusion they are not yet ready to say out loud.

But the arithmetic does not care whether you are ready.

The Human Intelligence Premium is collapsing. Not eventually. Now. Sector by sector, salary band by salary band, hiring freeze by hiring freeze, the market price of human cognitive effort is trending toward the marginal cost of compute. The Gutenberg press has been installed. The scribal monks are still at their desks. And the books are already printing.

The monster is not coming for us.

We assembled it. We animated it. We gave it everything it needed.

And then — like Victor — we got very busy and convinced ourselves it was someone else’s problem.

The ones who survive the next decade are not the ones who were most credentialled. They are the ones who understood, early enough to act, that the rules had changed — and moved before the room stopped flickering and went dark.

The clock is running.

It has been running since 2017.

You now know it is running.

That is the only advantage left.

Use it.

Houston, We Have a Problem. And This Time, They Can’t Bring Us Home.

“The most important question facing humanity is whether the decline of cognitive labour is a transition or a terminus.”

— Citrini Research, The 2028 Global Intelligence Crisis

The Number They Didn’t Want You to Do the Maths On

In March 2023, Goldman Sachs published a report.

The headline number was 300 million. As in: the equivalent of 300 million full-time jobs worldwide that could be “lost or degraded” by generative artificial intelligence. That was the phrase they chose. “Lost or degraded.” The language of an investment bank that wanted to be taken seriously while not triggering a civilisational panic in the same paragraph as a valuation call.

Three hundred million jobs. To provide some scale: that is roughly the entire working population of the United States, Canada, the United Kingdom, Germany, France, and Australia combined. Gone. Or degraded — which, in employment terms, means doing the same work for less money, with fewer protections, in a market that no longer needs you badly enough to negotiate fairly.

The AI industry’s PR machine responded with practised speed. “AI creates new jobs,” said the spokespeople, the think-tank fellows, the HR directors, the government ministers holding their carefully prepared responses. “Every industrial revolution destroyed jobs and created more. This will be no different.”

It was a beautiful argument. It had historical weight, rhetorical elegance, and the particular confidence of people who have never had to worry about which job they would do next. It was also, once you do the maths that nobody in a press conference wants to perform, almost entirely wrong.

Here is the basic maths. Feel free to correct me or stop me if I am going too fast.

The World Economic Forum — not a radical publication, not a fringe alarm-raiser, but the institution that runs Davos, that hosts heads of state and CEOs and central bankers in the Swiss Alps every January — published its Future of Jobs Report 2025. The headline: by 2030, 92 million jobs will be displaced and 170 million new ones will be created. Net gain: 78 million jobs. Progress. The system works. Upskill. Reskill. Move on. But I won’t.

Why?

I read the small print.

Of those 170 million new jobs, the dominant categories are: AI specialists, data engineers, renewable energy technicians, and infrastructure workers to build the data centres that house the AI. In the United States alone, Goldman estimates 500,000 additional electrical and construction workers will be needed by 2030 to meet the electricity demands of the AI infrastructure.

So. For the lawyer made redundant by Harvey AI: the job market is creating roles in data centre construction. They definitely can bring some transferable skills to data centre construction. For the marketing team gutted by Claude Code: the economy needs HVAC contractors to cool the servers. For the radiologist whose diagnostic role has been replaced by imaging AI: there is a growing shortage of grid electricians.

The argument is not wrong. The new jobs are being created by AI. Just not for the people who lost them. Not in the same cities. Not at the same salaries. Not accessible to someone who spent seven years and $200,000 becoming a specialist in a profession that is now being automated.
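The headline arithmetic is worth writing out, because the net figure hides the mismatch. Below is a minimal sketch using the WEF figures quoted above. The `reachable_share` parameter is purely hypothetical (it is not a WEF number): it stands for the fraction of new roles that displaced workers could realistically fill, given skills, city, and salary.

```python
# Figures quoted above from the WEF Future of Jobs Report 2025 (millions of jobs).
DISPLACED_M = 92
CREATED_M = 170


def net_change(created_m: float, displaced_m: float) -> float:
    """Headline net change in jobs, in millions."""
    return created_m - displaced_m


def displaced_left_behind(displaced_m: float, created_m: float, reachable_share: float) -> float:
    """Displaced workers (millions) with no reachable new role.

    reachable_share is a HYPOTHETICAL fraction of the new jobs that displaced
    workers can actually take up. It is an illustration, not a measured value.
    """
    reachable_jobs = created_m * reachable_share
    return max(0.0, displaced_m - reachable_jobs)


if __name__ == "__main__":
    print(f"Headline net change: +{net_change(CREATED_M, DISPLACED_M)} million")
    for share in (1.0, 0.5, 0.25):  # illustrative values only
        left = displaced_left_behind(DISPLACED_M, CREATED_M, share)
        print(f"reachable share {share:.0%}: {left:.1f} million left behind")
```

The net figure stays at +78 million whatever the share is. Only the second number moves, and that second number is the one the headline never shows.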

The “AI creates jobs” argument is true in the same way that saying the Gutenberg press “created jobs” for typesetters and print-shop owners is true. It is technically accurate and practically irrelevant for the scribe who has spent a decade mastering illuminated manuscripts and cannot pivot to operating a printing press by Wednesday.

And it becomes even less relevant when you understand that the people who are not made redundant — the ones who survive the first wave — are not going to multiply. They are going to work harder. They are going to use AI. They are going to do the work of the fifty people who were laid off, with AI assistance, for roughly the same salary they were already on, with no share of the productivity gains they are now generating. That is not new employment. That is the same employment, at an accelerated pace, with a smaller team and a larger workload.

One person doing the growth marketing for a $380 billion company.

This is not a success story about efficiency. This is the job description of the survivor: the person we were told to worry about. Remember “AI won’t replace your job, but a person who can use AI will”? It was true and a lie at the same time. The survivor is the last person on a team of forty, who will do the work of forty, using AI, and receive the salary of one. The CFOs are already doing the calculation in every sector, and they are drooling at the savings.

Jobs Are Not Lost. They Vanish

There is a word we have been using incorrectly, and the incorrectness is not accidental.

“Lost.”

When we say jobs are “lost” to AI, we import a framework that belongs to a different kind of disruption. Jobs lost in a recession are lost the way a wallet is lost — badly, painfully, at genuine personal cost — but with the underlying assumption that they can be found again. The 2008 financial crisis destroyed millions of jobs in finance, construction, and retail. But the underlying demand for those services remained. The world still needed bankers, builders, and shop assistants. The crisis was a demand shock, not a structural elimination. When the economy recovered, the jobs recovered with it. Not perfectly. Not equitably. But they returned.

This is not that.

When a software company decides to replace its junior developer cohort with Claude Code, the decision is not made because of a recession. It is not made because demand has fallen. It is made because the AI is cheaper, faster, and increasingly better — and those facts do not change when the economy recovers. They get more pronounced. The replacement is structural, not cyclical. The job is not waiting in a drawer for conditions to improve. It is gone. Architecturally, permanently gone.

Think about the travel agent. When online booking arrived, travel agents did not merely lose jobs in a downturn. The profession vanished entirely. When I arrived in England, the high street had travel agent shops with nice pictures on the glass fronts of people in exotic places. Finding a travel agent on the high street now is like going on a treasure hunt. Travel agents simply vanished. Not partially. Not temporarily. The entire infrastructure of the profession dissolved: the high-street offices, the specialist training, the professional association, the career path from junior to senior agent, the tacit knowledge about which airlines overbooked, which hotels had quiet rooms, which tour operators could be trusted. It did not return when the economy recovered. It did not return when travel demand surged. It had been replaced by a structural alternative that could do the work without the human, and the market made its calculation once and never reconsidered it.

AI is doing this — simultaneously — to dozens of professions. This is the scary and worrying part.

Not one industry at a time, slowly, over decades, allowing the workforce to adapt and migrate. All at once. Cognitively. Because the model is general enough — or general enough for the practical threshold of “good enough to do the job cheaper” — to apply the same displacement logic across law, medicine, finance, marketing, administration, coding, and customer service in the same half-decade.

The job is not lost. It has vanished.

And here is the number that belongs on the front page of every newspaper, not buried in a Goldman Sachs footnote. In the United States alone, in 2025 (not 2030, not some speculative future, but the calendar year that just ended), AI is estimated to have eliminated between 200,000 and 300,000 jobs, either through displacement or through positions permanently forgone. The official count, based on employer self-reporting, captured 54,836. The real number is roughly three and a half to five and a half times that, because employers have rational incentives to label AI-driven cuts as “restructuring” or “efficiency measures,” and because the largest channel of AI displacement in 2025 was not layoffs at all. It was the quiet decision not to replace workers who left.
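The gap between the estimate and the official count can be checked directly. A minimal sketch, using only the three figures quoted above; the function name is just an illustrative label.

```python
OFFICIAL_COUNT = 54_836   # employer self-reported figure quoted above
ESTIMATE_LOW = 200_000    # lower bound of the estimate quoted above
ESTIMATE_HIGH = 300_000   # upper bound of the estimate quoted above


def undercount_multiple(estimate: int, official: int) -> float:
    """How many times larger the estimate is than the official count."""
    return estimate / official


low = undercount_multiple(ESTIMATE_LOW, OFFICIAL_COUNT)
high = undercount_multiple(ESTIMATE_HIGH, OFFICIAL_COUNT)

# prints "Estimate is 3.6x to 5.5x the official count"
print(f"Estimate is {low:.1f}x to {high:.1f}x the official count")
```

Put the other way round, on these figures the official count captures somewhere between a fifth and a quarter of the estimated total.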

The jobs are vanishing in silence. That is the most important sentence in this section. They are not going out with a press release. They are going out the way a light goes out when nobody replaces the bulb — quietly, gradually, and only noticeable when the room is dark.

Goldman Sachs said 300 million. Conservative. They were modelling the foreseeable adoption curve. They were not modelling agentic AI at scale. They were not modelling the humanoid robotics pipeline. They were not modelling the compounding second and third-order effects of the Intelligence Displacement Spiral that Citrini described.

The real number is larger. Significantly larger. And the correct word is not “lost.”

The correct word is vanished.

The Intelligence Displacement Spiral

Citrini Research did something in their 2028 Global Intelligence Crisis report that almost nobody else has done: they followed the logic all the way to the end.

Most economists stop at displacement. They count the jobs that disappear, project the jobs that will be created by AI, subtract one from the other, call the result “net impact,” and present it in a way that implies the system will self-correct somehow with a little bit of pain. They are modelling a stable system with a shock, not an unstable system in structural collapse.

Citrini modelled the feedback loop.

Here is what it looks like.

AI improves. Companies adopt AI to reduce labour costs. White-collar layoffs increase. Displaced workers spend less. Lower consumer spending weakens businesses that rely on discretionary consumer demand. Those businesses respond by cutting more workers and investing further in automation to protect margins. The automation investment accelerates AI capability. AI improves. Companies adopt more AI. White-collar layoffs increase further.

The loop is self-reinforcing, and (this is the critical observation) there is no natural brake. In a normal recession, falling wages eventually make human labour cheap enough to re-employ. The price signal corrects. The cycle turns. But in an AI displacement cycle, the alternative to human labour does not get more expensive as human labour gets cheaper. It gets less expensive, because the AI is improving and the compute costs are falling. The human cannot compete on price against a technology that is simultaneously getting better and getting cheaper. The natural corrective mechanism is broken. When Jensen Huang says AI will become a utility, like electricity, or when Sam Altman says AI will become as cheap as electricity, this is the silent part you are supposed to figure out yourself.
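The broken brake can be shown with a toy model. This is a sketch under loudly stated assumptions, not a forecast and not Citrini's model: every number below (the starting wage, the cost of the alternative, the yearly rates of decline) is hypothetical and chosen only to show the shape of the argument.

```python
def first_year_human_is_cheaper(wage, wage_decline, alt_cost, alt_decline, years=30):
    """Return the first year in which the human wage falls below the cost of
    the alternative, or None if that never happens within `years`.

    All inputs are HYPOTHETICAL: wage and alt_cost are in arbitrary units,
    and the decline rates are yearly fractions (0.05 means 5% per year).
    """
    for year in range(years + 1):
        if wage < alt_cost:
            return year
        wage *= 1 - wage_decline
        alt_cost *= 1 - alt_decline
    return None


# Normal recession: wages fall, the cost of the alternative stays put.
recession = first_year_human_is_cheaper(wage=100, wage_decline=0.05,
                                        alt_cost=80, alt_decline=0.0)

# AI displacement: wages fall, but the alternative gets cheaper even faster.
ai_cycle = first_year_human_is_cheaper(wage=100, wage_decline=0.05,
                                       alt_cost=80, alt_decline=0.20)

print("Recession: human labour becomes the cheaper option in year", recession)
print("AI cycle:  human labour becomes the cheaper option in year", ai_cycle)
```

With these made-up numbers the recession case crosses over in year 5, and the AI case never crosses within the window. The price signal that ends a normal downturn needs the alternative's cost to hold still, or at least to fall more slowly than wages do.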

Citrini illustrated this with a specific person: a senior product manager at Salesforce in 2025. Health insurance. 401(k). $180,000 a year. She lost her job in the third round of layoffs. After six months of searching, in a market that was already beginning to freeze at the professional level, she started driving for Uber. Her earnings dropped to $45,000, and she has to work overtime to make even that.

Multiply this dynamic by a few hundred thousand workers across every major metropolitan area. Overqualified labour flooding the service and gig economy, pushing down wages for existing workers who were already struggling. Sector-specific disruption metastasising into economy-wide wage compression.

This is not a job market correction. This is, at best, a cardiac event. And we are currently in the minutes just before the chest pains become undeniable.

The Maths Olympiad, The Bar Exam, and The End of IQ Premium

For most of the 20th century, IQ was the invisible mechanism behind elite professional sorting.

Nobody said it out loud at dinner parties. Nobody put it on a job advert. But it was the operating system beneath the credential. The reason investment banks recruited exclusively from Oxford, Cambridge, Harvard, and Princeton was not because those universities had better libraries. It was because those universities attracted, filtered, and certified the highest-IQ individuals — the ones who could hold the most variables in mind simultaneously, process the most information, reach the most accurate conclusions under pressure.

The premium careers — investment banking, hedge funds, quant trading, computer science, medicine, law, academic science, consulting — were, in their essential nature, IQ-premium careers. The salary was the price of cognitive scarcity. The credential was the certificate of that scarcity. The whole system was built on the assumption that the supply of very high IQ was limited, and that the economy would always reward it generously.

Suits (one of my favourite TV shows, not the garment) built nine seasons of drama on this premise. Harvey Specter and Mike Ross were compelling not despite their intelligence but because of it. The drama was the drama of exceptional minds operating in a profession that priced exceptional minds. The whole show is predicated on the notion that being the smartest person in the room is worth something, even if you never officially went to Harvard. It is not an accident that the show is about lawyers specifically, a profession whose entire value proposition is: you are paying for my ability to reason better than the other side.

Now.

AI sat the Bar Examination. It passed.

AI competed in the International Mathematical Olympiad, the competition that represents the absolute peak of human mathematical reasoning, the event where the most gifted mathematical minds of each generation test the very limits of structured human thought. In 2024, Google DeepMind’s systems solved four out of six IMO problems, achieving a score that would have earned a silver medal at the competition.

Not a participation certificate. A silver medal.

AI is now contributing to mathematical problems that have remained open for decades. In 2023, Google DeepMind’s FunSearch found new constructions for the cap set problem, a long-standing open question in combinatorics. The AI is not just doing the work of the student. It is doing the work of the professor.

The IQ premium is being absorbed. Not gradually. Comprehensively. The careers that commanded the highest salaries because they required the rarest cognitive gifts are precisely the careers that AI has been trained — deliberately, strategically, publicly — to target first. The Maths Olympiad. The Bar Exam. The USMLE. The coding benchmark. The financial modelling test. These are not random demonstrations. They are a sequence of press releases aimed at every boardroom on earth.